Thinking in Fundamentals

Meaning, Attention and Hierarchy

Understanding 3 fundamental AI research ideas, and using them to think about AI for real world problems.

I promise not to make any mention of Generative AI or Agentic AI in the rest of this article. Many are probably tired of all the handwaving and shrill hype of the last 2-3 years. Me too.

I offer no claims of how AI is going to change your life, take away your jobs, or fulfill your science fiction fantasies.

Nonetheless, AI is going to affect us. Hence, it’s useful to have an intuitive understanding of the key concepts that make it work. Such understanding could also help us use it better. This underpinned my Thinking in AI and Thinking in Networks series.

For this article, I would like to spend some time on 3 concepts I shared recently - meaning, attention, hierarchy.

I'd shared posts on 3 research diptychs (pairs of AI research papers published years apart) on these fundamental concepts. I won’t repeat the posts here, but will try to provide an intuitive recap of these concepts.

Representation

‘Word2Vec’ (2013) showed that we could use AI to learn vectors (a series of numbers) that have meaning. ‘Persona Vectors’ (2025) by Anthropic extended this to the personality traits of Large Language Models.

Here’s the thing. For AI, everything is represented as numbers and math. The word "cat" might just be [0.2, -0.5, 0.8, 0.3...]. There’s no sentience, but these numbers capture meaning (or semantics).

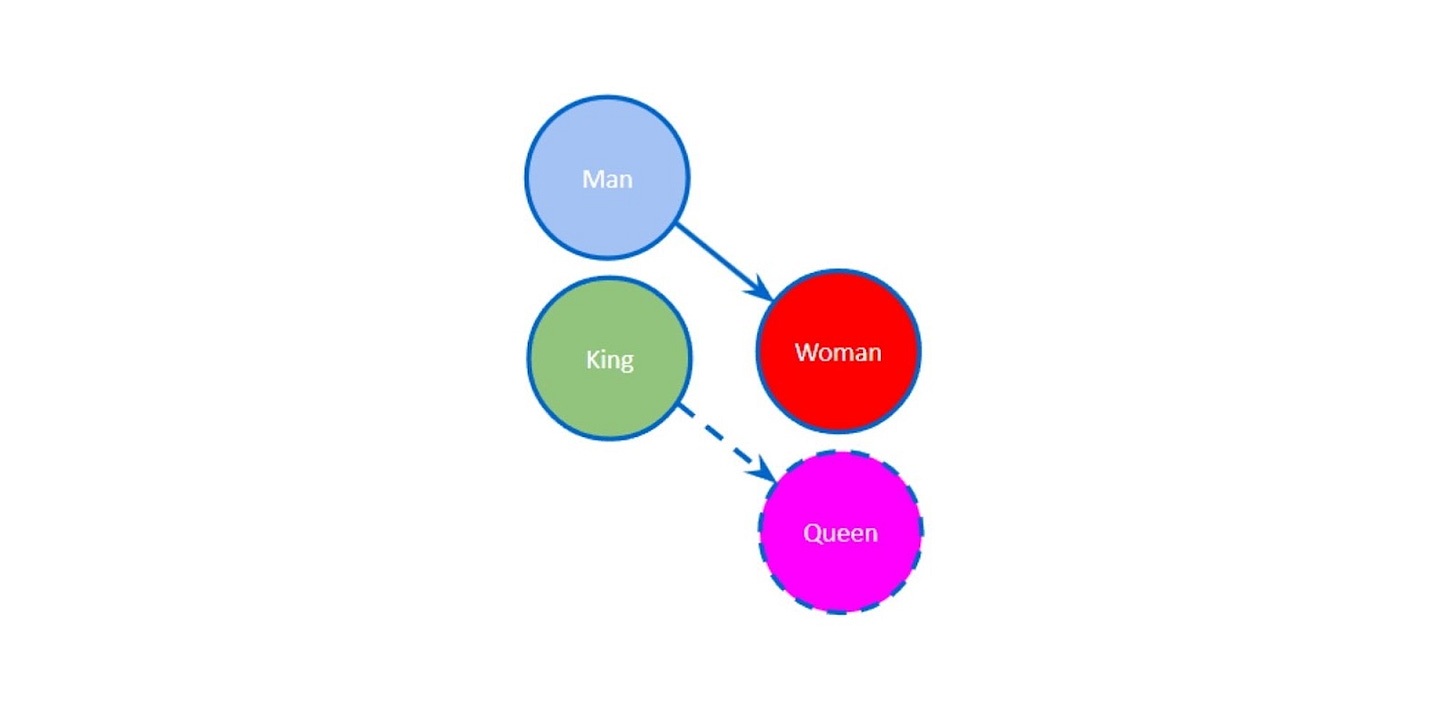

Think of what ‘Word2Vec’ does as learning a map. Not of locations, but of concepts. "King" is conceptually located between "royalty" and "male." "Queen" is the same distance from "royalty" but in the "female" direction. Anthropic’s ‘Persona Vectors’ took this further, to personalities. What if personality traits were directions too? What if "helpful" was a direction you could move towards? Like adjusting bass and treble on a speaker, we could adjust an AI's behaviors.
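To make the map idea concrete, here is a minimal sketch with made-up 2-D vectors. The numbers and the axis meanings are my assumptions for illustration; real Word2Vec vectors are learned from text and have hundreds of dimensions with no hand-assigned meaning.

```python
import numpy as np

# Hypothetical 2-D embeddings: axis 0 ~ "royalty", axis 1 ~ "gender".
# These values are invented for illustration, not learned from data.
vectors = {
    "king":  np.array([0.9,  0.8]),
    "queen": np.array([0.9, -0.8]),
    "man":   np.array([0.1,  0.8]),
    "woman": np.array([0.1, -0.8]),
    "apple": np.array([-0.7, 0.0]),
}

def cosine(a, b):
    """Cosine similarity: 1.0 means the two vectors point the same way."""
    return float(a @ b / (np.linalg.norm(a) * np.linalg.norm(b)))

def nearest(target, exclude=()):
    """Return the word whose vector points most nearly in the target's direction."""
    candidates = {w: v for w, v in vectors.items() if w not in exclude}
    return max(candidates, key=lambda w: cosine(target, candidates[w]))

# A "direction" on the map: the step that takes "man" to "woman".
direction = vectors["woman"] - vectors["man"]

# Move "king" along that direction and look up the closest remaining word.
moved = vectors["king"] + direction
result = nearest(moved, exclude={"king", "man", "woman"})
print(result)
```

Steering a trait like "helpful" follows the same intuition of adding a direction, though on a model's internal activations rather than on word vectors.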

Attention

‘Attention is All You Need’ (2017) gave AI the ability to attend to everything at once. ‘Gemma 3’ (2025) showed a shift towards more efficient approaches by alternating between deep local focus and broad awareness.

You're reading this sentence right now, but you're not processing each word equally. Your brain emphasizes certain words while maintaining awareness of the whole. This is attention.

Before ‘Attention is All You Need’, AI read text like someone forced to play telephone – processing one word, passing it forward, hoping the meaning survived. But by the end of a long sentence, the beginning was often forgotten.

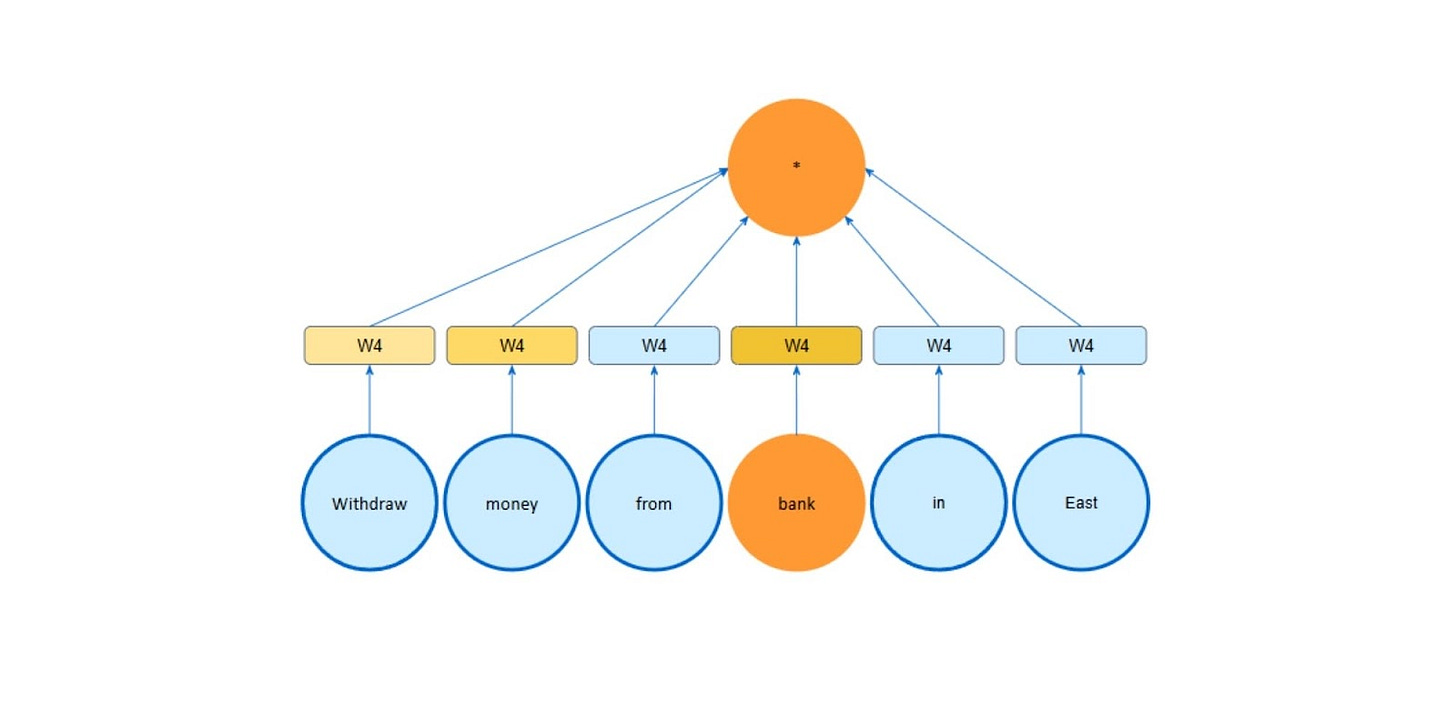

The attention mechanism meant that AI could see all words simultaneously and decide which ones matter for understanding each part. But there was a cost. Tracking how every word relates to every other word is like trying to remember every conversation at a massive party.
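For the curious, the core computation can be sketched in a few lines. This is a simplified illustration, assuming random stand-in word vectors and skipping the learned query, key and value projections that a real Transformer applies first.

```python
import numpy as np

def softmax(x, axis=-1):
    """Turn raw scores into weights that are positive and sum to 1."""
    x = x - x.max(axis=axis, keepdims=True)  # subtract max for numerical stability
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def attention(Q, K, V):
    """Scaled dot-product attention over a whole sequence at once."""
    d = Q.shape[-1]
    scores = Q @ K.T / np.sqrt(d)        # one score per (word, other word) pair
    weights = softmax(scores, axis=-1)   # each row: how much one word attends to the others
    return weights @ V, weights

# Six hypothetical "words", each a random 4-dimensional vector.
rng = np.random.default_rng(0)
n_words, dim = 6, 4
X = rng.normal(size=(n_words, dim))

# Simplification: use X directly as queries, keys and values.
output, weights = attention(X, X, X)
print(weights.shape)  # one weight for every pair of words
```

The weight matrix has one entry per pair of words, which is where the cost comes from: double the sentence length and the number of pairs roughly quadruples.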

Recent evolutions of the attention mechanism, such as those in ‘Gemma 3’, are surprisingly human and simple. Just don't maintain awareness of everything all the time. Similar to reading, focus on the current paragraph while staying vaguely aware of the chapter's theme. Periodically, zoom out to connect ideas.
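A rough sketch of the "local focus" idea is a mask that lets each token look back only a few positions, compared with a mask that lets it look back at everything. The window size, sequence length and layer pattern below are illustrative assumptions, not Gemma 3's actual configuration.

```python
import numpy as np

def causal_mask(n):
    """Global view: token i may attend to every token up to and including itself."""
    return np.tril(np.ones((n, n), dtype=bool))

def sliding_window_mask(n, window):
    """Local view: token i may attend to itself and the previous window - 1 tokens."""
    i = np.arange(n)[:, None]
    j = np.arange(n)[None, :]
    return (j <= i) & (i - j < window)

n_tokens, window = 8, 3  # illustrative sizes only

global_pairs = int(causal_mask(n_tokens).sum())
local_pairs = int(sliding_window_mask(n_tokens, window).sum())
print(global_pairs, local_pairs)

# "Periodically, zoom out": interleave cheap local layers with an occasional
# global layer. This particular pattern is a made-up example.
layer_pattern = ["local", "local", "global"] * 2
```

The global count grows with the square of the sequence length, while the local count grows only linearly, which is the efficiency gain from not watching everything all the time.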

Hierarchy

Geoffrey Hinton (2021) hypothesized that AI would work better if it thought in hierarchies. ‘Hierarchical Reasoning Model’ (2025) answered with a surprisingly small model that outperformed giants by thinking hierarchically.

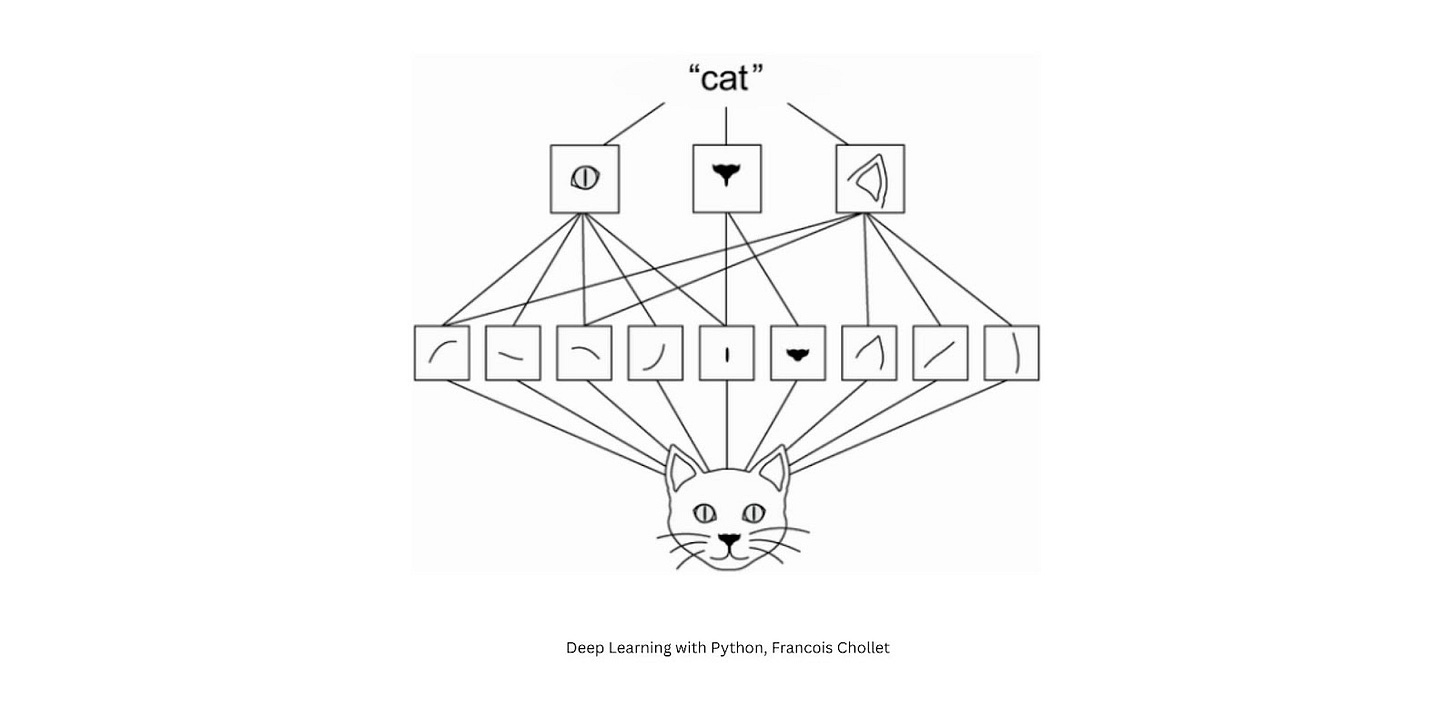

You don't see a forest as 50,000 individual leaves. You see a forest, then trees if needed, then branches, then leaves. This is hierarchical thinking – understanding wholes and parts, and knowing when each level matters.

Hinton's question was deceptively simple: how do you teach a machine to see both the forest and the trees, and how they relate to each other? Thinking in levels is what allowed the ‘Hierarchical Reasoning Model’ to be small but powerful. It's like learning the rules of grammar instead of memorizing every possible sentence. Way more compact.

Extending these Concepts

Now, let's talk about how these concepts could be used to change the way we think about using AI.

Extracting Meaning from Your Data (Representation)

We often treat our data like a filing cabinet – everything categorized, stored based on surface level labels. But what if your data could tell you what it means, not just what it is?

Remember those vectors that gave us "King - Man + Woman = Queen"? The same principle can apply to everything you have. Your customer isn't just "John who bought my product 3 times." They're a point in space defined by intent, preference patterns, and behavior trajectories.

Meaning allows us to go from "How do we better organize our data?" to "How do we better understand the underlying meaning of our data?" Every document, transaction, and interaction can be a coordinate in the space of meanings.

Similar things cluster naturally. Relationships emerge systematically.
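Here is a small sketch of what "similar things cluster" can look like in practice. The customer names, the three dimensions and their values are all hypothetical; in a real system the vectors would come from an embedding model rather than being typed by hand.

```python
import numpy as np

# Hypothetical customer embeddings (invented values, 3 dimensions for readability).
customers = {
    "john": [0.9, 0.1, 0.2],
    "mary": [0.8, 0.2, 0.1],
    "li":   [0.1, 0.9, 0.7],
    "omar": [0.2, 0.8, 0.9],
}

names = list(customers)
M = np.array([customers[n] for n in names], dtype=float)

# Normalise each row so that a dot product equals cosine similarity.
M_unit = M / np.linalg.norm(M, axis=1, keepdims=True)
similarity = M_unit @ M_unit.T

def most_similar(name):
    """Return the other customer closest to `name` in the space of meanings."""
    i = names.index(name)
    sims = similarity[i].copy()
    sims[i] = -np.inf  # ignore the customer's similarity to themselves
    return names[int(np.argmax(sims))]

print(most_similar("john"), most_similar("li"))
```

Nobody labelled John and Mary as the same kind of customer. The grouping falls out of where their points sit.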

Capturing the Right Context (Attention)

Your AI doesn't need to know everything all the time. It only needs to attend to what is necessary and relevant.

Think about a doctor diagnosing a patient. They focus intensely on current symptoms while selectively recalling relevant medical context. They don't review every single data point since birth. This is attention.

We sometimes fail to remember this when we use AI. We either starve the model of context (only showing the last few interactions) or drown it in context (dumping entire histories). Neither works well. Be intentional with the information or context you provide to AI.
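One way to be intentional is to keep the most recent interactions and add only the older ones that relate to the current question. The sketch below is an assumption-laden illustration: `select_context` is a hypothetical helper, and word overlap is a crude stand-in for a proper relevance measure such as embedding similarity.

```python
def select_context(history, query, keep_recent=2, keep_relevant=2):
    """Pick recent items plus older items that share words with the query.

    history: list of strings, oldest first.
    """
    n = len(history)
    recent_idx = set(range(max(0, n - keep_recent), n))
    query_words = set(query.lower().split())

    def overlap(i):
        # Crude relevance proxy: number of words shared with the query.
        return len(query_words & set(history[i].lower().split()))

    older = [i for i in range(n) if i not in recent_idx]
    relevant = sorted(older, key=overlap, reverse=True)[:keep_relevant]
    relevant = [i for i in relevant if overlap(i) > 0]  # drop unrelated items

    chosen = sorted(recent_idx | set(relevant))  # keep chronological order
    return [history[i] for i in chosen]

history = [
    "Customer asked about the refund policy for annual plans",
    "Customer updated their shipping address",
    "Customer reported a login error on mobile",
    "Customer asked how to export invoices",
    "Customer said the refund has not arrived yet",
]

context = select_context(history, "Where is my refund", keep_recent=2, keep_relevant=1)
for line in context:
    print(line)
```

The model sees the two latest interactions plus the one older note about refunds, and is spared the address change and the login error.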

Building with Common Tasks (Hierarchy)

Here's what seems like a paradox but makes total sense. As AI models get larger and more general purpose, they often get dumber at specific tasks. Remember all the fuss about Large Language Models not being able to count the r's in "strawberry"? Why? Because forcing one model to do everything is like hiring one person to be the cleaner, cook, and CEO simultaneously. They might manage, but juggling every role makes it harder to excel at any one of them. And if a model can help extract information well, why would I care that it can't count the letters in "strawberry"?

We can adopt the same mindset when solving a problem with AI. Treating the problem like a monolithic thing to tackle and just passing it to a huge AI model is usually not the most efficient or effective. Breaking down the problem into common tasks, each handled by the right AI model, often outperforms throwing everything at a monolithic generalist. This was the focus of my series of notes on Thinking in AI.

Applying these ideas to real world problems

Ask yourself:

Are we capturing what our data means, or just what it says?

Does our AI know what context matters for each decision?

Have we broken our problem into the right levels, or are we forcing one tool to work at every scale?

Where are we using brute force when we could use structure?

What relationships in our data remain hidden because we're not looking at the right level of abstraction?

These aren't independent ideas. They work together.

Consider using AI for customer service:

Representation: Learn the meaning behind customer issues.

Attention: Focus on the right context (recent and relevant).

Hierarchy: Instead of one big general purpose AI model, use different models for different tasks - intent detection, sentiment analysis, and response generation.
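The decomposition above can be sketched as a small pipeline. Everything here is hypothetical: the function names, the keyword rules and the canned replies are simple stand-ins for whatever specialised models you would actually plug into each step.

```python
from dataclasses import dataclass

@dataclass
class Ticket:
    text: str
    intent: str = ""
    sentiment: str = ""
    reply: str = ""

def detect_intent(text):
    """Stand-in for a small intent classifier (keyword rules for illustration)."""
    lowered = text.lower()
    if "refund" in lowered:
        return "refund"
    if "login" in lowered or "password" in lowered:
        return "account_access"
    return "other"

def detect_sentiment(text):
    """Stand-in for a sentiment model (a tiny word list for illustration)."""
    negative = {"angry", "terrible", "unacceptable", "frustrated"}
    cleaned = text.lower().replace(".", " ").replace(",", " ").replace("!", " ")
    return "negative" if set(cleaned.split()) & negative else "neutral"

def generate_reply(intent, sentiment):
    """Stand-in for a response generator (canned templates for illustration)."""
    opening = "I'm sorry for the trouble. " if sentiment == "negative" else ""
    bodies = {
        "refund": "Let me check the status of your refund.",
        "account_access": "Let me help you get back into your account.",
        "other": "Could you tell me a bit more about the issue?",
    }
    return opening + bodies[intent]

def handle(text):
    """Run each task in turn; every step could be swapped for a better model."""
    ticket = Ticket(text=text)
    ticket.intent = detect_intent(text)
    ticket.sentiment = detect_sentiment(text)
    ticket.reply = generate_reply(ticket.intent, ticket.sentiment)
    return ticket

ticket = handle("I am frustrated, my refund never arrived!")
print(ticket.intent, ticket.sentiment)
print(ticket.reply)
```

Because each step has one job, you can test, replace or improve it without touching the others.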

Your Next Step: Pick your most frustrating AI use case. Ask:

Is it failing because the AI doesn't understand meaning? → Focus on representation

Is it overwhelmed by irrelevant information? → Fix how much information you are making it attend to

Is one model trying to do too much? → Break it into hierarchical tasks

No revolution. No job apocalypse. No killer robots. Just better tools used more intelligently and judiciously.

One last point for you to Google or ask a Large Language Model yourself. Even after all these advances with Large Language Models in the last 2-3 years, guess what still works best for tabular data or time series data most of the time, and how old those techniques are?

Word2Vec - https://arxiv.org/pdf/1301.3781

Persona Vectors - https://arxiv.org/pdf/2507.21509

Attention is All You Need - https://arxiv.org/pdf/1706.03762

Gemma 3 Technical Report - https://arxiv.org/pdf/2503.19786

Hinton on Part-Whole Hierarchies - https://arxiv.org/pdf/2102.12627

Hierarchical Reasoning Model - https://arxiv.org/pdf/2506.21734

Bit of a tangent, but this connects to something interesting about how AI models handle meaning.

Current LLMs encode "concepts" and their relationships into massive, complex numerical matrices. Despite all this complexity, these encodings still somewhat represent humanly understandable ideas - words, phrases, and how they connect to each other.

But there's emerging research on AI models that use latent space reasoning, where things get much more opaque. These models might achieve better reasoning and performance precisely because they're "freed" from working within natural language constraints. They can operate in higher-dimensional mathematical spaces that our brains simply can't process.

The trade-off is significant: we might get more capable AI, but the models' inner workings would hold even less semantic meaning for humans. Their "thought" processes would become essentially alien to us.

What are your thoughts on this direction in AI research? The interpretability challenges seem both fascinating and concerning.

To me, this is similar to how a neural network might generate great predictions, but a simpler multivariate regression whose results are easier to explain ultimately ends up being more "trustable" for stakeholders.