Thinking in Attention

The Many Ways One Can Use Attention in AI

In this note, I am going to undertake the challenge of explaining my journey of using attention in my research, across 8 phases:

Basic attention: Weighted averaging

Positional: Where and when

Multimodal: Fusing different modalities

Hierarchical: Global vs. local information

Knowledge-guided: Start with what’s known

Graph-guided: Let relationships guide attention

Dynamic: Adapt to changing patterns

Concept-based: Make attention interpretable

It’s essentially my dissertation, compressed from 300 pages to 3 pages.

At its core, attention is just a way of weighting things dynamically.

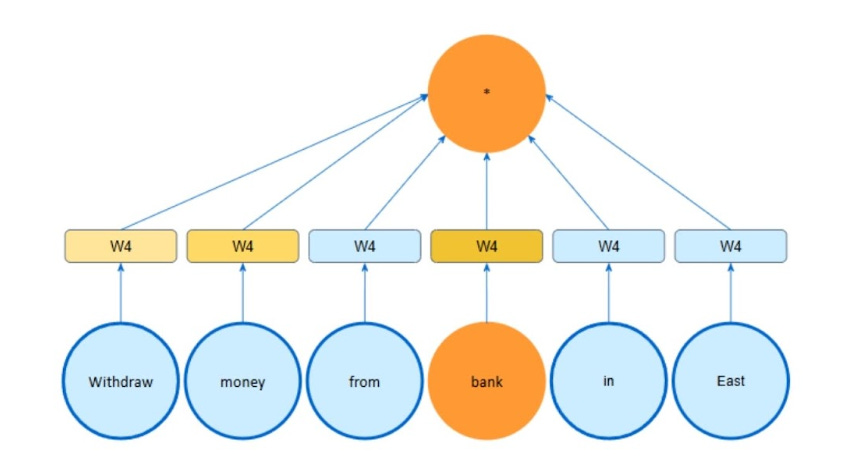

1. Basic Attention

You’re at a dinner party.

Conversations around the table. Someone mentions your name across the table. Your best friend is beside you. A stranger is telling a joke at the end of the table. You can’t listen to everything at once. So you focus on the person in front of you while staying vaguely aware of the broader discussions.

This selective focus is attention. Your brain is paying different levels of attention to different things based on relevance: background noise (low relevance), current conversation (medium relevance), whoever said your name (high relevance). You assign different scores to each. Current speaker: 7, someone saying your name: 10, background chatter: 1. You normalise them so they add up to 100%, and then weight your attention based on the resulting percentages.

This is attention, simplified. Score what matters, normalise, then use weights to pay attention accordingly.

2. Positional Attention

Attention is position-blind by default. But what was said first and what was mentioned last matters. For temporal data like stock prices, this is critical. One needs to capture sequential patterns - trends, seasons, cycles.

The solution is simple. Add position information directly to the attention weights. Give each position a unique fingerprint, or even a set of fingerprints.

3. Multimodal Attention

Combining different types of information: photos, text, prices. Which matters most?

Depends on context. Romantic dinner? Photos 50%, reviews 33%, price 17%.

Work lunch? Price 64%, reviews 22%, photos 14%.

Different data types need different attention weights. The model learns to weight based on what you’re trying to achieve.

4. Hierarchical Attention

Working with financial data, I discovered different entities may care about different scales of information.

When the Federal Reserve raises interest rates: banks care intensely (this affects their entire business model), retailers care somewhat (affects financing costs slightly). When a local store has a sales spike: retailers care intensely (local performance is key), banks care somewhat (one store doesn’t move the needle).

The solution was to process global and local information separately, then let each entity learn its own mix. Banks learned to focus 80% on global macro trends, 20% on local details. Retailers learned the opposite: 30% global, 70% local.

5. Knowledge-Guided Attention

Training models from scratch wastes time and effort. Why not start from pre-existing knowledge, like what is in Wikipedia. Use existing weights that already capture relationships and use it to guide attention. Then learn the task-specific refinements.

Training is faster. Performance better. It’s like arriving at a networking session already knowing who works on what. You can immediately focus on relevant people instead of being a social butterfly.

6. Graph-Guided Attention

Relationships carry information. Tesla announces a battery breakthrough. Should every company care equally? No. Information should flow through the network of relationships, like conversations spreading through social circles at a party.

You can use attention to learn such relationships.

7. Dynamic Attention

The world is not static. It evolves. And so should attention.

Say you use attention to model the relationship between Tesla and Panasonic. They have a strong partnership in January (supply contract), which weakens in June (demand wanes), which strengthens again in December (demand surge). Attention weights should reflect this changing reality - which can be explicit (observable events), or implicit (undercurrents).

8. Concept-Based Attention

Raw attention weights are uninterpretable. Learn concepts, and apply attention on the concepts rather than raw features. Now attention weights have semantic meaning. At the dinner party, “I was paying attention to people discussing the project (60%), some attention to launch timelines (25%), a bit to team dynamics (15%)” vs “I was paying 0.35 attention to person #7, 0.22 to person #13.” One is interpretable, the other isn’t.

Putting It Together

The power isn’t in any single phase. It’s in composing them for specific problems.

For example, for financial forecasting, I stack them: positional encoding for temporal patterns, multimodal attention to combine prices and news, graph-guided attention for company relationships, hierarchical attention separating global and local scales, knowledge-guided using pretrained embeddings, dynamic attention for evolving partnerships, concept-based attention for interpretability.

If you think about it in the abstract - attention is a way of thinking.

Thinking means you don’t process everything or do everything. It’s for knowing what matters, when it matters, and why it matters.

#ThinkingAI #AIFundamentals #GenerativeAI #AIReflections

https://ink.library.smu.edu.sg/etd_coll/514/ ← The unreadable 300+ page version of my dissertation, if you’re feeling masochistic.”