On the Units of AI Governance

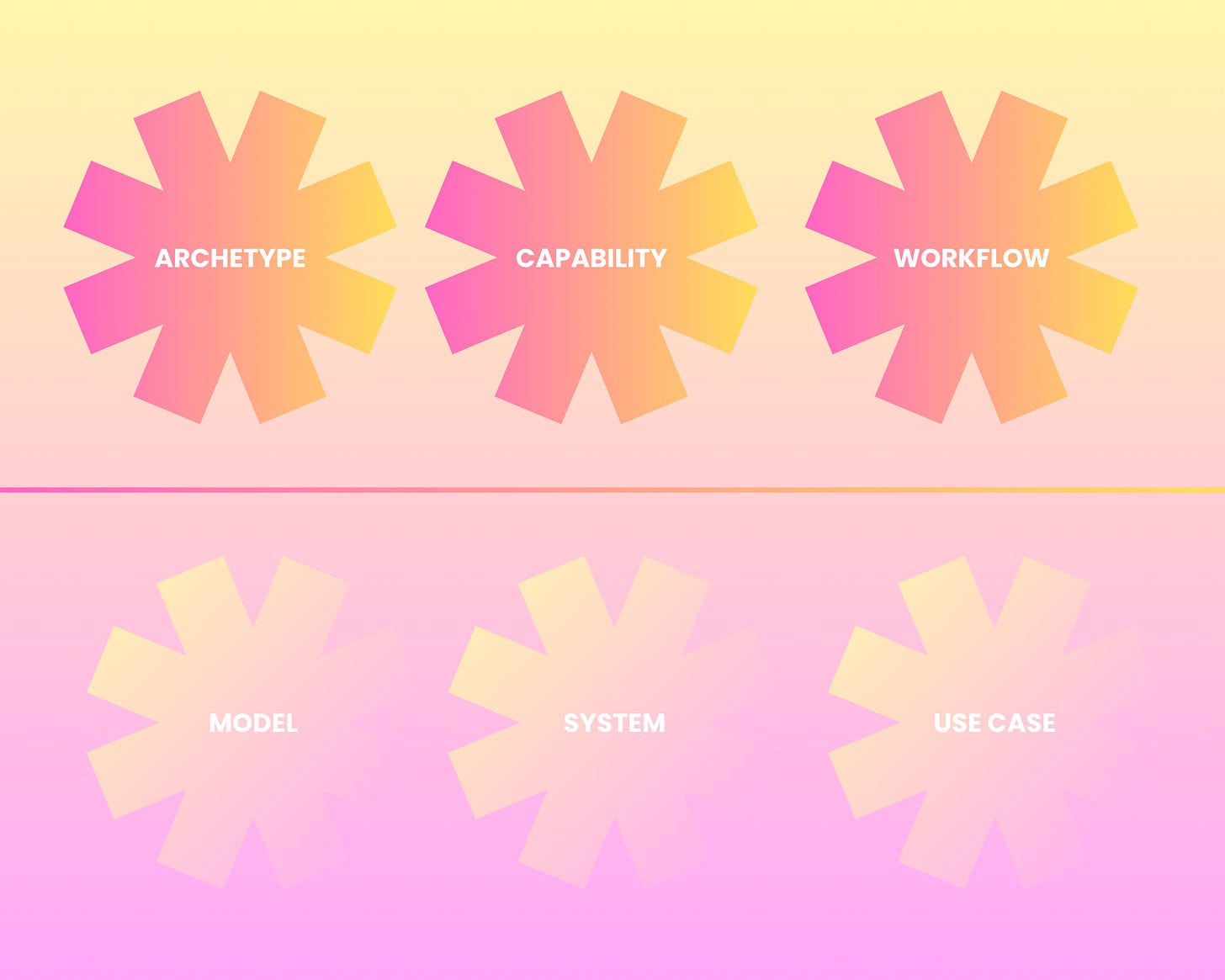

From models, systems and use cases to archetypes, capabilities and workflows.

There’s been a slow realization. Not about life. But a more boring one about AI risk.

I’ve been doing a number of talks on the intersection of two disciplines.

First discipline. Model risk management. My model risk supervision days.

Second discipline. Computer science. AI models. My ill-advised side quest to get a PhD in computer science at 42.

The MAS AI risk management guidelines I wrote draw on both and cover 3 units of governance that relate to these disciplines - model, system, use case. They form a good foundation. But I feel like something has shifted.

1️⃣ Model - Obviously the domain of model risk management (MRM). The world of the late SR 11-7 & the new SR 26-2 from the US. As well as the UK’s SS 1/23 and Canada’s E23. What OpenAI, Anthropic etc. release and spend shitloads of money on.

2️⃣ System - The domain of technology risk management. Too many frameworks here to list. But the recent “AI” vulnerabilities that have been in the news, from Anthropic’s troubles to Vercel, have nothing to do with the AI model and everything to do with the fragility of systems in this rush to do “AI”.

3️⃣ Use Case - What AI risk management focuses on. Anchored by NIST AI RMF, ISO 42001 and MAS’ AI risk management guidelines. This is closest to what we experience when we use AI, but is also the least scalable.

And above the line.

4️⃣ Archetype. Prior to Agentic AI, I already saw folks grouping Generative AI into archetypes - question and answer, summarization, retrieval-augmented generation. LLMs are general purpose tools, but grouping their uses helps with scalability. For once, stereotyping things helps.

5️⃣ Capability. A bounded class of actions with authority, constraints and evidence requirements. Capabilities extend the idea of archetypes to the world of Agentic AI, where we decompose and compose what AI agents do. See the paper on Agentic MRM by Lukasz Szpruch, Agus Sudjianto, Tanveer Bhatti and me on this, and the Agentic Risk and Capabilities (ARC) framework by Shaun Khoo and Roy Ka-Wei Lee.
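To make the idea of a capability a little more concrete, here is a minimal sketch in Python. It is only my illustration of "a bounded class of actions with authority, constraints and evidence requirements", not how the Agentic MRM paper or the ARC framework define or implement it. The `Capability` class, its field names and the refund example are all hypothetical.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical illustration: one way to write down a capability as data.
@dataclass(frozen=True)
class Capability:
    name: str
    actions: frozenset[str]            # the bounded class of actions
    authority: str                     # on whose behalf the agent acts
    constraints: tuple[Callable[[dict], bool], ...] = ()  # checks on each request
    evidence_required: frozenset[str] = frozenset()       # what must be logged

    def permits(self, action: str, request: dict) -> bool:
        """True if the action is in scope and every constraint holds."""
        return action in self.actions and all(c(request) for c in self.constraints)

    def missing_evidence(self, evidence: dict) -> set[str]:
        """Evidence keys that are required but not yet supplied."""
        return set(self.evidence_required) - set(evidence)

# Made-up example: an agent allowed to issue small refunds.
refund = Capability(
    name="issue_refund",
    actions=frozenset({"lookup_order", "issue_refund"}),
    authority="customer support team",
    constraints=(lambda req: req.get("amount", 0) <= 100,),
    evidence_required=frozenset({"order_id", "reason"}),
)
```

The point of the sketch is that the same `Capability` object could be reused across many use cases, which is where the scalability would come from.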

6️⃣ Workflow. I know terms like trajectories and runtime have come into vogue (runtime is the term we use in the title of the Agentic MRM paper). But I feel like they are manifestations of what happens when AI agents do something, rather than a unit of governance. Workflows seem closer to something we can govern. And they relate nicely to the action space × autonomy perspective of AI agents (see Singapore’s Model AI Governance Framework for Agentic AI). As you relax the constraints of workflows, you get trajectories and runtimes that can go wider and wilder.
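A toy way to see the "wider and wilder" point: treat a workflow as the set of step-to-step transitions an agent is allowed to make, and count how many distinct trajectories fit inside it. This is a rough sketch under my own assumptions. The step names, the `count_trajectories` helper and the step limit are all hypothetical and do not come from any of the frameworks mentioned.

```python
# Hypothetical illustration: a workflow as allowed transitions between steps.
def count_trajectories(allowed: dict[str, set[str]], start: str, end: str,
                       max_steps: int) -> int:
    """Count distinct step sequences from start to end within max_steps transitions."""
    def walk(node: str, steps_left: int) -> int:
        if node == end:
            return 1          # reached the end: one complete trajectory
        if steps_left == 0:
            return 0          # ran out of budget before finishing
        return sum(walk(nxt, steps_left - 1) for nxt in allowed.get(node, ()))
    return walk(start, max_steps)

# Tightly constrained workflow: one fixed path.
strict = {
    "intake": {"retrieve"},
    "retrieve": {"draft"},
    "draft": {"review"},
    "review": {"send"},
}

# Relaxed workflow: the agent may skip, loop back or send without review.
relaxed = {
    "intake": {"retrieve", "draft"},
    "retrieve": {"retrieve", "draft"},
    "draft": {"retrieve", "review", "send"},
    "review": {"draft", "send"},
}

strict_count = count_trajectories(strict, "intake", "send", max_steps=6)
relaxed_count = count_trajectories(relaxed, "intake", "send", max_steps=6)
```

The strict workflow admits exactly one trajectory, while the relaxed one admits many. What you govern is the workflow definition. The trajectories are what fall out of it at runtime.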

The three units above the line represent a new set of risks. But somehow I feel they also represent a new route to scalability.

Models, systems and use cases are usually specific. Whereas archetypes, capabilities and workflows can be generalized across a range of applications.

Just thinking out loud. Let me know your perspectives. I’ll try to write more about this in the weeks ahead.

#AIGovernance #AgenticAI