Models

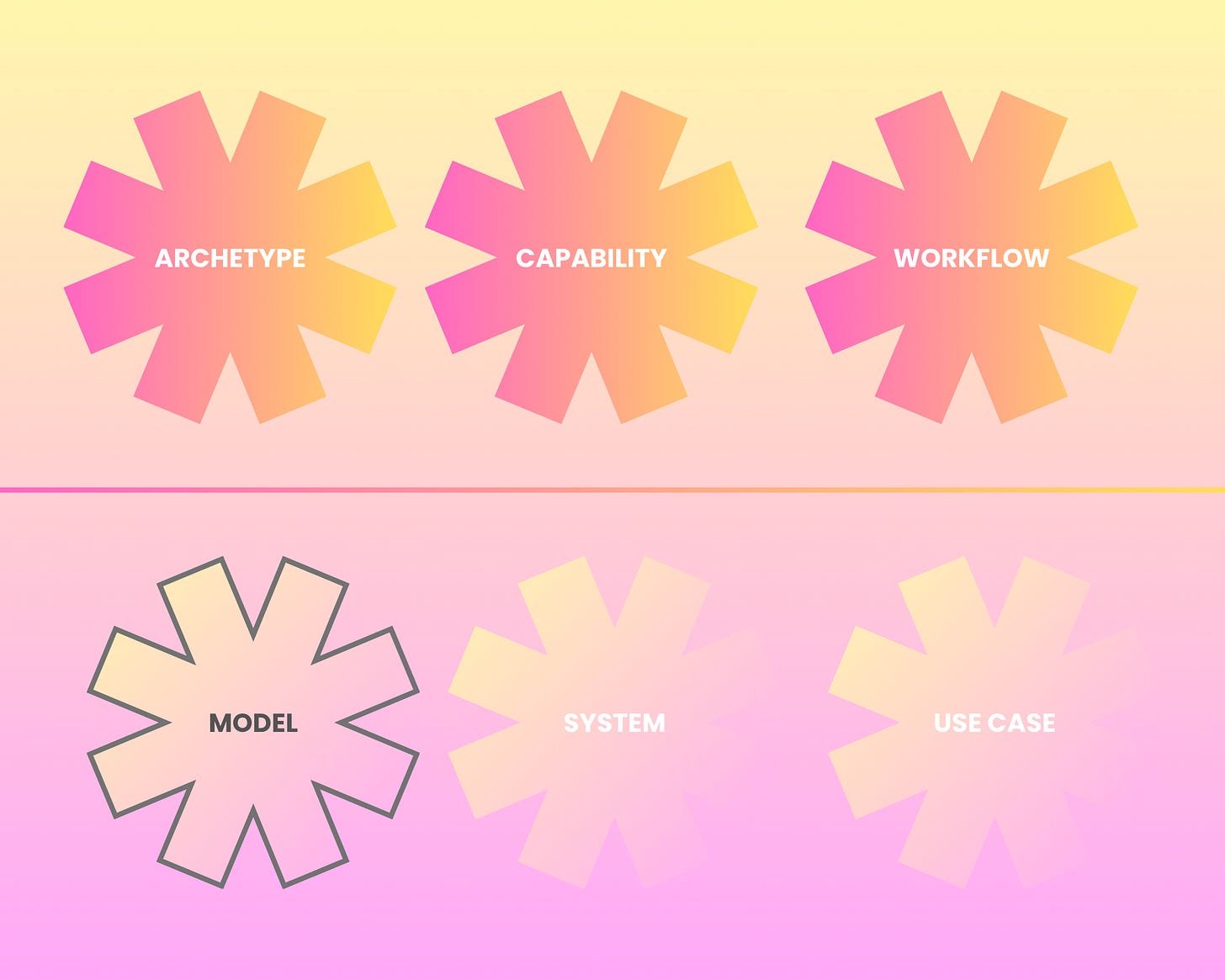

From models, systems and use cases to archetypes, capabilities and workflows.

Picking up from last week’s thought piece on the spectrum from models, systems and use cases to archetypes, capabilities and workflows.

Starting with models. My favourite.

The first models I ever met were simple curves, built by bootstrapping zero rates off swap quotes in a Master of Financial Engineering classroom. Then came their more sophisticated cousins: Black-Scholes, SABR, Hull-White, Heston. I still remember the books by Emanuel Derman, Massimo Morini, and many others. For many years, SR 11-7 was the only guidance governing this world, though you could see snippets of it in parts of the Basel capital rules. To be honest, I was not a great student, and I was confused about such quantitative models for many years.
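
For anyone who never sat in that classroom, here is a minimal Python sketch of the bootstrapping step. It assumes a simplified textbook setup: one par swap quote per whole-year maturity, annual fixed payments with a year fraction of 1, discounting off the same curve, and annual compounding. Real curve building adds day counts, interpolation and separate discounting curves, and the function name `bootstrap_zero_rates` is just for illustration.

```python
def bootstrap_zero_rates(par_rates):
    """Bootstrap discount factors and zero rates from annual par swap rates.

    Simplifying assumptions (illustrative only): one quote per maturity
    1, 2, ..., N years, annual fixed payments with accrual 1.0,
    single-curve discounting and annual compounding.
    """
    discount_factors = []
    zero_rates = []
    annuity = 0.0  # running sum of the discount factors already solved
    for n, s in enumerate(par_rates, start=1):
        # Par condition for the n-year swap: s * (annuity + D_n) = 1 - D_n,
        # solved for the one unknown, D_n
        d_n = (1.0 - s * annuity) / (1.0 + s)
        discount_factors.append(d_n)
        # Annually compounded zero rate implied by D_n = (1 + z_n) ** -n
        zero_rates.append(d_n ** (-1.0 / n) - 1.0)
        annuity += d_n
    return discount_factors, zero_rates


dfs, zeros = bootstrap_zero_rates([0.03, 0.032, 0.034])
print("discount factors:", dfs)
print("zero rates:", zeros)
```

Each discount factor is solved from one swap's par condition, given the ones already found, which is why the method is called bootstrapping.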

Then I met machine learning models. Decision trees. Random forests. Gradient boosted trees. Support vector machines. Then deep learning models. RNNs, CNNs. Still models, but slightly more generalized. To be honest, it was only when I was learning machine learning and deep learning that I finally understood the quantitative models I had learnt earlier. Same for LLMs. And agents are just systems wrapped around LLMs.

Once you see the main parts (the selected function, the objective, the optimization method, and the evaluation method), you realize that they ain't very different. Asking questions about any model ultimately comes down to these parts and the assumptions underlying them.
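
To make the four parts concrete, here is a toy Python sketch that labels each one explicitly. The straight line, the squared-error objective, plain gradient descent and the held-out test set are illustrative choices, not anything prescribed, and the function names are hypothetical. Swap the line for a yield curve, a tree ensemble or a neural network and the slots stay the same; only their contents change.

```python
import random

# 1. Selected function: a straight line f(x) = a + b * x (an illustrative choice)
def predict(params, x):
    a, b = params
    return a + b * x

# 2. Objective: mean squared error over a dataset of (x, y) pairs
def mse(params, data):
    return sum((predict(params, x) - y) ** 2 for x, y in data) / len(data)

# 3. Optimization method: plain gradient descent on the training MSE
def fit(train, lr=0.1, steps=2000):
    a, b = 0.0, 0.0
    n = len(train)
    for _ in range(steps):
        # Partial derivatives of the MSE with respect to a and b
        grad_a = sum(2 * (a + b * x - y) for x, y in train) / n
        grad_b = sum(2 * (a + b * x - y) * x for x, y in train) / n
        a -= lr * grad_a
        b -= lr * grad_b
    return a, b

# 4. Evaluation method: error on held-out data the optimizer never saw
def evaluate(params, held_out):
    return mse(params, held_out)

# Synthetic data from y = 1 + 2x plus a little noise (made up for the example)
rng = random.Random(0)
xs = [rng.uniform(0.0, 1.0) for _ in range(200)]
points = [(x, 1.0 + 2.0 * x + rng.gauss(0.0, 0.1)) for x in xs]
train_set, test_set = points[:150], points[150:]

params = fit(train_set)
print("fitted (a, b):", params, "held-out MSE:", evaluate(params, test_set))
```

The assumptions live in every slot: the straight line assumes linearity, the squared error assumes big misses matter more than small ones, and the held-out test assumes tomorrow's data looks like today's.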

And it's the AI model that ultimately makes an AI system different from any other software system. It's also why, in 2024, when I was still at the Monetary Authority of Singapore (MAS), we did a review of AI model risk management in banks. If there was any place to start, the models were as good as any.

And it’s where the 3 ‘U’s arise.

Uncertainty. All models have it. Irreducible randomness you can’t eliminate. Reducible gaps you can close with more data. But always some left.
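
A minimal sketch of the two kinds of uncertainty, assuming the simplest possible model: independent Gaussian observations, with a new observation predicted by the sample mean. The predictive variance is then roughly σ² + σ²/n. The first term is the noise in the data and does not shrink; the second is our uncertainty about the mean and falls as n grows. The helper `uncertainty_split` is hypothetical.

```python
import random
import statistics

def uncertainty_split(samples):
    """Split the variance of a prediction for a new observation into two parts.

    Illustrative assumption: observations are a fixed mean plus independent
    noise, and the new observation is predicted with the sample mean.
    """
    n = len(samples)
    noise_var = statistics.variance(samples)  # irreducible: spread of the data itself
    estimation_var = noise_var / n            # reducible: error in the estimated mean
    return noise_var, estimation_var

rng = random.Random(42)
for n in (10, 100, 10_000):
    data = [rng.gauss(0.0, 1.0) for _ in range(n)]
    noise_var, estimation_var = uncertainty_split(data)
    print(f"n={n:>6}  irreducible ~ {noise_var:.3f}  reducible ~ {estimation_var:.5f}")
```

As n grows, the reducible term heads towards zero while the irreducible term settles around the true noise variance of 1. More data closes one gap, never the other.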

Unexpectedness. More complex models behave in ways nobody designed. Emergent capabilities. Adversarial vulnerabilities. Gaming the system. Misalignment.

Unexplainability. The degree to which we can explain a decision varies. Transparency, explainability, interpretability, but ultimately about understanding.
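
One common, model-agnostic way to probe explainability is permutation importance: shuffle one input at a time and measure how much the model's error grows. The sketch below is a plain-Python illustration of that general technique, not a method from the review; the `black_box` function is hypothetical and stands in for a model we can query but not read. Note what it gives you: a ranking of which inputs matter on average, not an understanding of why.

```python
import random

def permutation_importance(predict, X, y, rng, n_repeats=5):
    """Average increase in mean squared error when one feature's values
    are shuffled, breaking that feature's link to the target."""
    def mse(rows):
        return sum((predict(r) - t) ** 2 for r, t in zip(rows, y)) / len(y)

    baseline = mse(X)
    importances = []
    for j in range(len(X[0])):
        increases = []
        for _ in range(n_repeats):
            column = [row[j] for row in X]
            rng.shuffle(column)
            # Rebuild each row with feature j replaced by its shuffled value
            shuffled = [row[:j] + [v] + row[j + 1:] for row, v in zip(X, column)]
            increases.append(mse(shuffled) - baseline)
        importances.append(sum(increases) / n_repeats)
    return importances

# Hypothetical "black box": we can query it but pretend we can't read its formula
def black_box(row):
    return 3.0 * row[0] + row[1] ** 2  # the third input (index 2) is ignored

rng = random.Random(0)
X = [[rng.uniform(-1.0, 1.0) for _ in range(3)] for _ in range(500)]
y = [black_box(row) for row in X]
print(permutation_importance(black_box, X, y, rng))
```

Here the first input should dominate, the second should matter a little, and the ignored third should score zero.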

The Fed, OCC and FDIC dropped SR 26-2 on April 17, replacing SR 11-7 after fifteen years. Buried in it is a single-sentence carve-out: ‘GenAI and agentic AI are explicitly excluded from scope.’

Personally, given how connected all these models are, whether quantitative models or LLMs, I find this exclusion a little puzzling.

What do you think?