Learning risk management again, because of AI

A FIX NextGen meeting at BlackRock. Folks who live and breathe the markets. And then there’s me.

I started by owning up: “I am a bureaucrat.” Then I shared my random walk: from engineering to art policy, Basel regulation to investment risk, a PhD in AI, then AI risk supervision. Messy life. Messy research. I wasn’t sure what they would be interested in, so I just laid it all out.

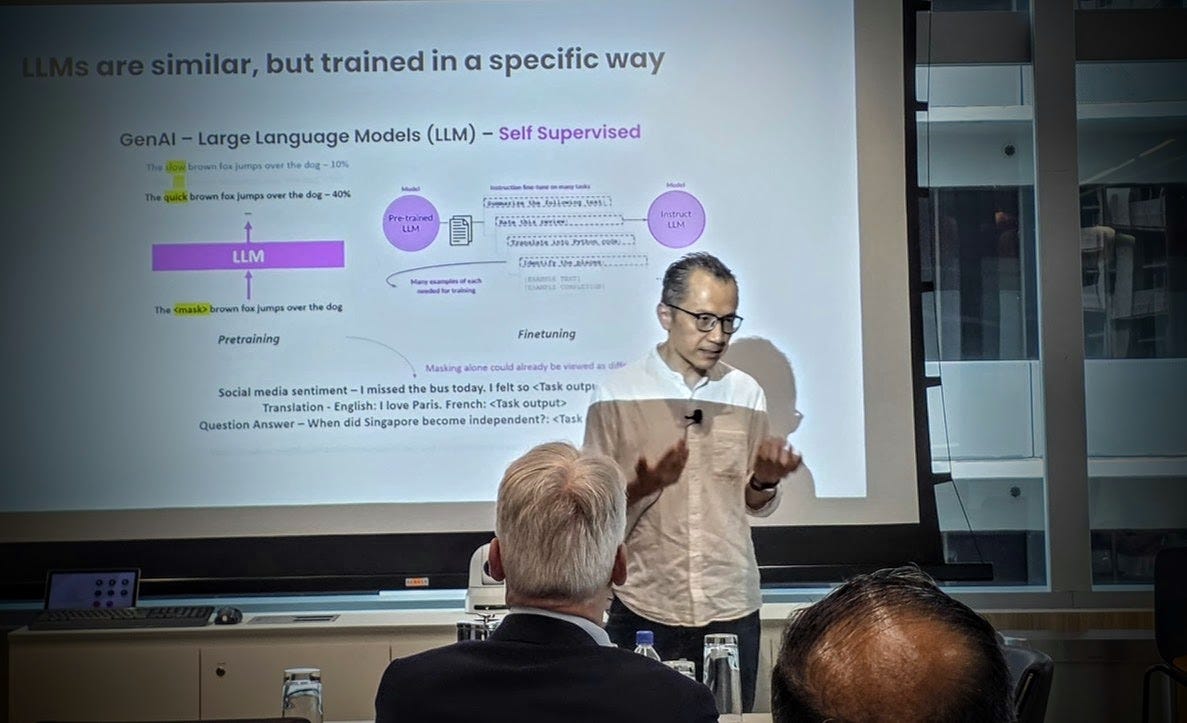

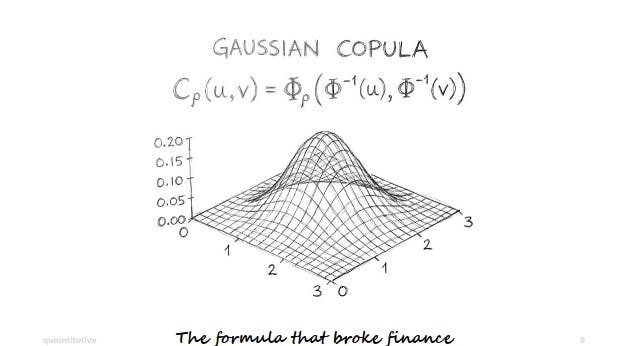

As for the talk, I took them on a journey. From the Gaussian copula that contributed to the GFC, to the questions we’re tackling now with large language models.

That line (from the GFC to AI today) may seem strange. But it is a very clear one.

The Formula That Broke Finance

A single model. The single factor Gaussian copula. Elegant, tractable, widely adopted for pricing CDOs. But totally unrealistic. In fact, I would say the decision to use it to model CDOs was bordering on criminal.

But even then, the GFC would not have happened if not for the fact that nobody was watching these complex things, nobody fully understood them, and nobody was clearly accountable.
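For anyone who has not met the single-factor Gaussian copula, here is a minimal sketch of the idea, not a pricing model. Each name in a portfolio defaults when a latent variable falls below a threshold, and every latent variable shares one common market factor through a single correlation parameter. The function names, the 100-name portfolio, the 2% default probability and the correlation values are all illustrative assumptions of mine, not calibrated figures.

```python
import random
from statistics import NormalDist


def simulate_defaults(n_names, p_default, rho, n_trials, seed=0):
    """Simulate default counts under a single-factor Gaussian copula.

    All inputs are illustrative assumptions, not calibrated market values.
    Returns a list with the number of defaults in each simulated scenario.
    """
    rng = random.Random(seed)
    # A name defaults when its latent variable falls below this threshold.
    threshold = NormalDist().inv_cdf(p_default)
    counts = []
    for _ in range(n_trials):
        market = rng.gauss(0.0, 1.0)  # the one common factor shared by all names
        defaults = 0
        for _ in range(n_names):
            own = rng.gauss(0.0, 1.0)  # name-specific noise
            latent = (rho ** 0.5) * market + ((1.0 - rho) ** 0.5) * own
            if latent < threshold:
                defaults += 1
        counts.append(defaults)
    return counts


def tail_probability(counts, k):
    """Share of scenarios with at least k defaults."""
    return sum(c >= k for c in counts) / len(counts)


if __name__ == "__main__":
    for rho in (0.0, 0.3):
        counts = simulate_defaults(100, 0.02, rho, 2000)
        mean_defaults = sum(counts) / len(counts)
        print(rho, mean_defaults, tail_probability(counts, 10))
```

The average number of defaults is driven by the default probability, not by the correlation. What the single number `rho` changes is how often many names default together, which is exactly the part that matters for senior CDO tranches. One parameter carrying all of the dependence is what made the model tractable, and what made it unrealistic.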

In the aftermath: the US’s SR 11-7. Model risk management requirements. The predecessor of the UK’s SS 1/23 and Canada’s E-23. Singapore’s AI Risk Management Guidelines (AIRG) also share a lot with these guidelines. I wrote that last piece.

Complexity Squared

Here’s how the problem has changed.

2008: One model. Too simple. Trillions at stake. Nobody understood it.

2025: Way more than one model. Complexity². Everyone has an opinion.

Different. But also recognizable.

That’s the risk. Things look different enough to seem like a new problem. They’re not entirely. But the new parts matter.

3 U’s

What’s actually new - or at least amplified - in AI models.

Uncertainty. All models have it. How confident is the model in its output? Two flavors: irreducible - natural randomness you can’t eliminate. Reducible - gaps in knowledge that more data or better models can close.

Unexpectedness. Some AI models exhibit behaviors nobody designed. Emergent capabilities. Gaming the system. Adversarial vulnerabilities. Hidden biases. Misalignment.

Unexplainability. The degree to which we can explain AI decisions varies. Transparency, explainability, interpretability - not the same thing, and even combined, they don’t guarantee understanding.

These three make AI a harder risk management problem. Not a different one. Harder.
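The two flavors of uncertainty above are usually called aleatoric (irreducible) and epistemic (reducible). A rough way to see the difference is a bootstrap ensemble: the noise inside each resample stands in for the irreducible part, and the disagreement between ensemble members stands in for the reducible part. The sketch below is a toy under my own assumptions (a constant signal of 5.0, Gaussian noise, hypothetical function names), not how any particular AI model reports confidence.

```python
import random
from statistics import fmean, pvariance


def noisy_observations(n, noise_sd=1.0, seed=1):
    """Toy data: a constant signal (5.0, an arbitrary choice) plus Gaussian noise."""
    rng = random.Random(seed)
    return [5.0 + rng.gauss(0.0, noise_sd) for _ in range(n)]


def decompose_uncertainty(sample, n_members=100, seed=0):
    """Split uncertainty about a mean estimate using a bootstrap ensemble.

    Returns (irreducible, reducible):
      irreducible - average variance within each resample (noise in the data)
      reducible   - variance of the resample means (members disagreeing)
    """
    rng = random.Random(seed)
    means, variances = [], []
    for _ in range(n_members):
        # Resample with replacement to create one ensemble member.
        resample = [rng.choice(sample) for _ in sample]
        means.append(fmean(resample))
        variances.append(pvariance(resample))
    return fmean(variances), pvariance(means)


if __name__ == "__main__":
    for n in (20, 1000):
        irreducible, reducible = decompose_uncertainty(noisy_observations(n))
        print(n, irreducible, reducible)
```

With more data the reducible part shrinks toward zero, because the ensemble members agree more and more. The irreducible part stays near the noise level no matter how much data you add.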

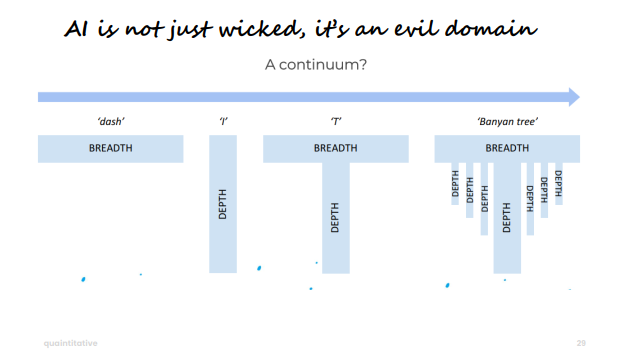

The Wicked Domain (and Then Some)

I used a framing from Epstein’s Range toward the end.

Kind domains - chess, music - have clear feedback. Deliberate practice compounds. The 10,000-hour idea from Malcolm Gladwell’s Outliers works in these domains. Depth helps here.

Wicked domains - medicine, finance - have delayed feedback, unclear rules, expertise that doesn’t transfer cleanly. Breadth sometimes helps here.

AI risk management sits firmly in wicked territory. But I think it might be something worse. An evil domain. The feedback is ambiguous, the rules keep shifting, and the expertise required keeps branching.

Not I-shaped for depth. Not T-shaped for breadth plus a bit of depth. More like a banyan tree. Multiple deep roots spreading from the same trunk. AI, governance, legal, model, technology, human factors - each requiring real depth.

Same Questions, New Complexity

Across my messy career - Basel policy, quant model risk, investment risk, AI supervision - three questions kept reappearing.

What’s at risk? You can’t manage what you haven’t found. This means discovering where AI exists in your institution and profiling how risky each system actually is.

How do we manage it? Once you know what’s at risk, you need controls in the right places, and ways to check if those controls are working.

Who’s accountable? Controls without owners become theatre. Someone has to own the risk, and the organization needs capability to sustain it.

Basically - find it, control it, own it.
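To make the three questions concrete, here is a minimal sketch of an AI inventory that records what was found, what controls it, and who owns it, then flags the gaps. The `AISystem` fields, the tier labels and the example systems are hypothetical illustrations of mine. They are not taken from SR 11-7, AIRG or any institution's actual register.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class AISystem:
    """One entry in a hypothetical AI inventory."""
    name: str
    risk_tier: str                                      # find it: how risky is it?
    controls: List[str] = field(default_factory=list)   # control it
    owner: Optional[str] = None                         # own it


def find_gaps(inventory: List[AISystem]) -> Dict[str, List[str]]:
    """Return the systems that are missing controls or an accountable owner."""
    gaps: Dict[str, List[str]] = {}
    for system in inventory:
        issues = []
        if not system.controls:
            issues.append("no controls")
        if system.owner is None:
            issues.append("no owner")
        if issues:
            gaps[system.name] = issues
    return gaps


if __name__ == "__main__":
    # Example entries are made up for illustration.
    inventory = [
        AISystem("credit-scoring-model", "high", ["annual validation"], "Head of Credit Risk"),
        AISystem("chatbot-pilot", "medium", ["output filter"]),
        AISystem("doc-summarizer", "low"),
    ]
    for name, issues in find_gaps(inventory).items():
        print(name, issues)
```

A real register would carry far more detail. The point is only that the three questions map onto three fields, and an empty field is a visible gap.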

As I wrote before, these aren’t new questions. They’re the same questions risk management in finance has been asking for decades. SR 11-7 answered them for model risk. AIRG is answering them again, in a harder context.

Normal technology. Normal systems. Normal risk questions.

At the end of the session there were some useful questions. My answers to most of those are in FIX’s post here.

But there was one that stuck with me. About how real Citrini’s dystopian AI narrative was. I wrote about it last week here. But more thoughts came to mind because of the question. Will do another post in greater detail later.

#AI #AIRiskManagement #ModelRisk #FIXTradingCommunity #FinTech #NextGen