Introduction to Thinking in AI (and Generative AI)

Subscribe to this series on LinkedIn or Substack

Aside from conducting research at the intersection of graph neural networks and time series deep learning models, my other interest during my PhD studies was exploring novel tasks to which such AI models could be applied.

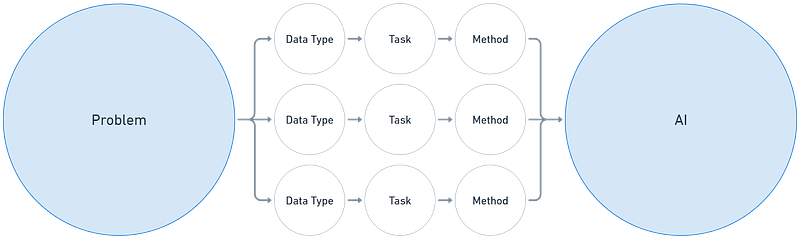

The most important lesson I learnt from these explorations was that being able to understand, frame, and break down complex problems into well-defined AI tasks was often more important than understanding and deconstructing the latest AI model. I loosely refer to this way of approaching AI as thinking in AI. Thinking in AI differs from the usual ways of solving problems without AI because it requires a deeper understanding of the different data types, tasks and models in AI.

It’s been a while since I graduated and moved on to real-world problems outside of academia, so I thought it would be a good time to reflect on this topic by writing a series of short notes focused on thinking in AI. I also gave a few talks on this topic last year (slides here) that should give a rough idea of what I mean, but I will extend and strengthen some of these ideas in this series. To be clear, most of the concepts in this series are not new, especially to AI researchers or practitioners, but I hope that articulating the way I think of them might be useful to readers who are not as familiar with AI.

What motivated this?

But first, some background. Generative AI came to the fore at the end of 2022, when I was already starting to compile my publications for my final dissertation. Interestingly, I had started my PhD (Computer Science) journey three years earlier because of a deep fascination with Generative Adversarial Networks (GANs). GANs were regarded as cutting-edge AI models for image generation then. My intent was to research GANs, but after a series of failed experiments, I quickly pivoted to the intersection of graph neural networks and time series deep learning models.

At the end of 2022, I was not just fascinated again with generative models, but also felt that I had dodged a bullet (or two). GANs had been mostly supplanted by diffusion models (e.g., Stable Diffusion). Publishing research papers in computer science venues was already extremely competitive, and the hype around Generative AI would most certainly supercharge this. The sea change also made many of us question the relevance of our non-Generative AI research.

But I still recall my (infinitely wise) supervisor remarking that the greatest value of the PhD training was not understanding the latest or best models or techniques, but learning how to frame problems as tasks in a way that would allow us to solve them effectively.

This piece of wisdom gets to the heart of what I loosely refer to as thinking in AI.

Thinking in AI when tackling problems

So how can this be applied to solving problems?

Imagine facing a common challenge — needing to extract meaningful insights or take action based on a large volume of text information.

Such a problem can manifest in a multitude of forms across different roles:

an analyst trying to make sense of the latest developments in the news;

a researcher trying to synthesize vast amounts of academic literature for a review;

a legal professional sifting through documents to find relevant cases;

a marketing manager tracking brand sentiment in online conversations and reviews; or

a customer service executive needing to respond to customer feedback.

As you can see, the possibilities are endless.

We could even take it further. For example, the customer service executive could be trying to respond to customer feedback with information that is not just textual in nature, but information in the form of:

Tabular data, if customer information and case information are stored in spreadsheets;

Visual data, if the feedback included photos or videos of products;

Network data, if there is a link to trace relationships between different customers and products; or

Time series data, if there is a need to understand trends or patterns across time in the customer information.

There are many other types of data, for example 3D data, and we could even combine, say, network and time series data to get a series of networks that evolve across time (incidentally the focus of most of my PhD research). You probably get the idea.
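To make the idea of a series of networks that evolve across time a little more concrete, here is a minimal sketch in Python. It assumes a made-up representation in which each weekly snapshot is a set of (customer, product) edges; the names `snapshots` and `edge_changes`, and the data itself, are hypothetical and purely illustrative.

```python
# Hypothetical data: each week maps to a set of (customer, product) edges.
snapshots = {
    "2024-W01": {("alice", "kettle"), ("bob", "toaster")},
    "2024-W02": {("alice", "kettle"), ("bob", "toaster"), ("carol", "kettle")},
    "2024-W03": {("carol", "kettle"), ("dave", "kettle")},
}


def edge_changes(snapshots):
    """Return (period, added_edges, removed_edges) for each consecutive pair of snapshots."""
    periods = sorted(snapshots)  # ISO week labels sort chronologically as strings
    changes = []
    for prev, curr in zip(periods, periods[1:]):
        added = snapshots[curr] - snapshots[prev]
        removed = snapshots[prev] - snapshots[curr]
        changes.append((curr, added, removed))
    return changes


changes = edge_changes(snapshots)
for period, added, removed in changes:
    print(period, "added:", sorted(added), "removed:", sorted(removed))
```

Even this toy version shows why the data type matters: the interesting signal is neither in a single network nor in a single time series, but in how the relationships change from one period to the next.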

One could say: let’s just throw it all into the latest Large Language Model (LLM), or even a multimodal LLM. Then, when it does not work or the result is hallucinated, we throw up our hands in dismay and say that the technology is still immature and risky. Even when it does work, some folks would proclaim that there are still risks because we can’t explain the result.

One could also approach this as a unique problem, and start focusing on bespoke solutions that are designed for this specific problem.

Neither approach may be optimal.

AI and tasks

If we think about the problem from the perspective of tasks, and how these tasks relate to what different AI models (not just LLMs or Generative AI) can do, then the nature of the problem becomes quite different.

Take the customer service executive’s problem again as an example.

Should he or she dump all the text information into an LLM, or attempt to read and understand all of it? Neither approach focuses on the actual goal. The task is rarely just getting through the large volume of text, or even simply summarising it. Even summaries are often just a means to an end.

Instead, there is usually an underlying task or a series of underlying tasks that can be used to solve each problem. For instance, if we want to:

understand the general sentiment of customer feedback, then the task would be sentiment analysis.

extract key information relating to product orders or user details, then the task would be named entity recognition or information extraction.

identify the main topics or categories being discussed in the feedback, then the task would be text classification or topic modeling.

find similar issues or feedback from the past, then the task would be information retrieval or semantic search.

detect emerging patterns in feedback over time, then the task would involve trend analysis.

spot outliers in customer feedback patterns, then the task would be anomaly detection.

A problem may often involve a series of tasks. For example, fully addressing a complex complaint might involve classifying the issue, extracting specific details, retrieving related information from another database, analysing sentiment, and finally generating a response that synthesises all the information gathered.
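The chain of tasks described above can be sketched as a small pipeline. This is a toy illustration only, not a recommended implementation: the keyword lists, the `PAST_CASES` lookup, and every function name are hypothetical stand-ins for what would, in practice, be a trained classifier, an extraction model, a retrieval system, a sentiment model and a text generator.

```python
import re
from dataclasses import dataclass

# Hypothetical keyword lists; a real system would use trained models instead.
CATEGORY_KEYWORDS = {
    "delivery": {"late", "delivery", "shipping", "arrived"},
    "billing": {"refund", "charged", "invoice", "payment"},
}
NEGATIVE_WORDS = {"late", "broken", "angry", "disappointed", "never"}
POSITIVE_WORDS = {"great", "thanks", "love", "happy"}
# Hypothetical stand-in for a database of past cases.
PAST_CASES = {
    "delivery": "Offer a shipping refund and a tracking update.",
    "billing": "Escalate to the billing team within one business day.",
}


@dataclass
class Result:
    category: str
    order_ids: list
    sentiment: str
    reply: str


def tokenize(text):
    return set(re.findall(r"[a-z]+", text.lower()))


def classify(text):
    """Task 1: text classification (keyword overlap as a placeholder)."""
    tokens = tokenize(text)
    scores = {cat: len(tokens & kws) for cat, kws in CATEGORY_KEYWORDS.items()}
    best = max(scores, key=scores.get)
    return best if scores[best] > 0 else "other"


def extract_order_ids(text):
    """Task 2: information extraction (order numbers written as #1234)."""
    return re.findall(r"#(\d+)", text)


def analyse_sentiment(text):
    """Task 3: sentiment analysis (word counts as a placeholder)."""
    tokens = tokenize(text)
    score = len(tokens & POSITIVE_WORDS) - len(tokens & NEGATIVE_WORDS)
    return "negative" if score < 0 else "positive" if score > 0 else "neutral"


def retrieve(category):
    """Task 4: information retrieval (dictionary lookup as a placeholder)."""
    return PAST_CASES.get(category, "Route to a human agent.")


def handle_complaint(text):
    """Task 5: response generation, combining the outputs of the earlier tasks."""
    category = classify(text)
    order_ids = extract_order_ids(text)
    mood = analyse_sentiment(text)
    guidance = retrieve(category)
    opening = "We are sorry about this." if mood == "negative" else "Thank you for writing in."
    reply = f"{opening} Regarding order(s) {', '.join(order_ids) or 'unknown'}: {guidance}"
    return Result(category, order_ids, mood, reply)


result = handle_complaint("My order #1042 arrived late and I am disappointed.")
print(result)
```

The point of the sketch is the structure rather than the placeholder logic: each step is a separate, named task whose output can be inspected, and any one of them could be swapped for a stronger model (or an LLM call) without changing the rest.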

Benefits of thinking in AI

Thinking of AI in this way helps in the following ways:

Clarity: It forces a sharper, more precise understanding of the actual problem being solved.

Reality check: It prompts critical evaluation of whether AI is truly necessary, or if a simpler heuristic, traditional method, or even human intervention is more appropriate or efficient.

Tool selection: When AI is needed, defining the specific task helps determine the right tool — perhaps a targeted, efficient machine learning model trained for classification or extraction is better suited than a large, general-purpose LLM.

Better evaluation: It enables more meaningful evaluation. Assessing the accuracy of a sentiment score is different from measuring the relevance of search results or the coherence of generated text; task definition clarifies the relevant metrics.

Explainability: By breaking down a complex process into discrete tasks, it can improve our ability to understand and potentially explain both intermediate steps and the final result.
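As a small illustration of the evaluation point, the sketch below contrasts two common metrics: accuracy for a classification task such as sentiment analysis, and precision at k for a retrieval task such as semantic search. The labels and case identifiers are made up for the example, and the function names are my own.

```python
def accuracy(predicted, actual):
    """Fraction of predicted labels that match the true labels (classification)."""
    if len(predicted) != len(actual):
        raise ValueError("predicted and actual must have the same length")
    return sum(p == a for p, a in zip(predicted, actual)) / len(actual)


def precision_at_k(ranked_ids, relevant_ids, k):
    """Fraction of the top-k retrieved items that are relevant (retrieval)."""
    top_k = ranked_ids[:k]
    return sum(item in relevant_ids for item in top_k) / k


# Hypothetical sentiment predictions versus human labels.
sentiment_accuracy = accuracy(
    ["neg", "pos", "neg", "pos"],
    ["neg", "pos", "pos", "pos"],
)

# Hypothetical ranked search results versus the set of truly relevant past cases.
search_precision = precision_at_k(["c7", "c2", "c9", "c4"], {"c2", "c4", "c5"}, k=3)

print(f"sentiment accuracy: {sentiment_accuracy:.2f}")
print(f"search precision@3: {search_precision:.2f}")
```

Neither number says anything about the other task, which is why naming the task first tends to make the choice of metric much easier.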

What this series will cover

By learning how to think in AI, we gain a set of mental models for using AI more effectively, and more realistic expectations of what we can achieve.

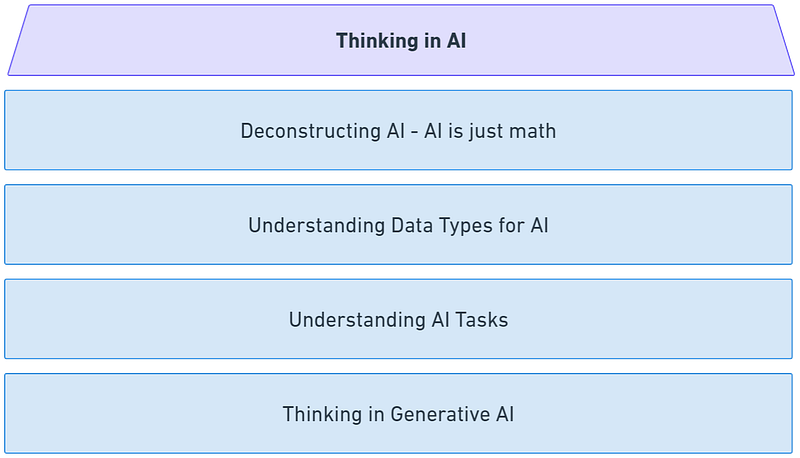

In this upcoming series of short notes:

We will first explore how an AI model is just math, in a short note on understanding the core of what AI (and Generative AI) is.

We will then delve deeper into how thinking in AI is really about understanding data types and tasks, in a note that focuses on how to frame problems through the lens of data types and tasks in AI.

And finally, we end the series by applying these perspectives to thinking in (Generative) AI, including perspectives on data types, tasks and evaluation of different groupings of Generative AI tasks or archetypes.