AI Agents as Normal Systems

If AI is normal technology, then AI agents are perhaps … just normal systems.

Arvind Narayanan and Sayash Kapoor articulated a compelling thesis, “AI as Normal Technology,” a while back. Their core argument: AI is best understood through the lens of past general-purpose technologies such as electricity, the internet, and computing, rather than as a potential superintelligent entity.

So if AI is normal technology, then perhaps AI agents are just normal systems. Not entities that can turn rogue, deceive, or collude.

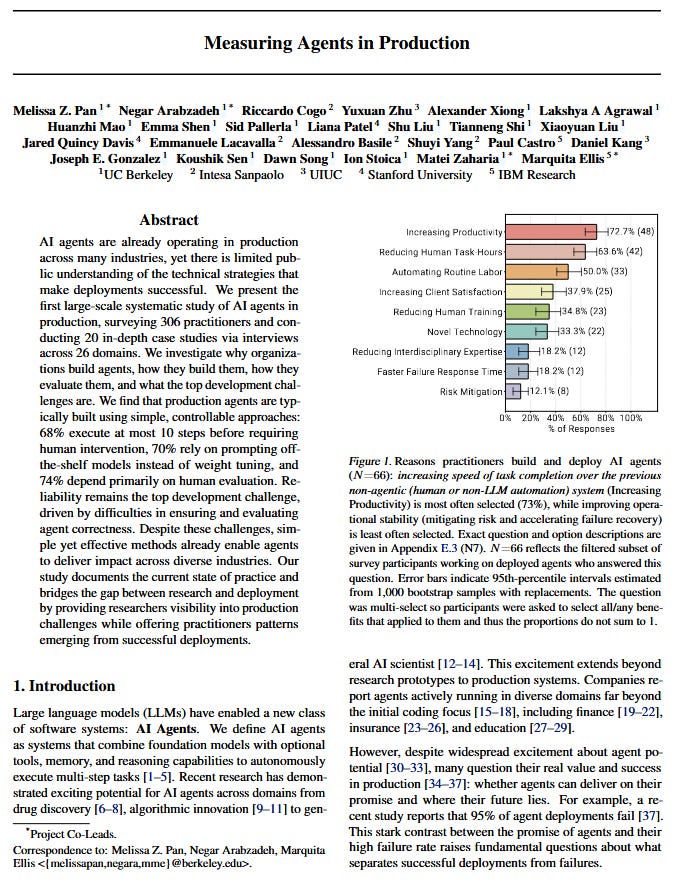

A recent paper, “Measuring Agents in Production,” provides some evidence. It studies AI agents in production through a survey of 306 practitioners and 20 in-depth case studies.

Some thoughts.

1️⃣ Just like systems, specification is the core work.

Points from paper:

- “Production agents favor well-scoped, static workflows ..”

- “Organizations deliberately bound agent behavior within specific action spaces ...”

- “Deployment architectures favor predefined, structured workflows over open-ended autonomous planning to ensure reliability.”

My take: The idea that one can simply unleash AI agents on a problem and have it solved is pure fantasy. Before an AI agent can tackle a problem, someone still has to write the specs, decompose the problem into clear tasks, scope the action space, think through orchestration, design the human handoffs, and define the success criteria. Only then can the system work.
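To make the point concrete, here is a minimal sketch of what "scoping the action space" and "designing the human handoffs" could look like in code. Everything in it (`AgentSpec`, `route`, the action names) is hypothetical and made up for illustration. It is not taken from the paper or from any agent framework.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentSpec:
    """Hypothetical spec a human writes before the agent does anything."""
    task: str
    allowed_actions: frozenset   # what the agent may do on its own
    handoff_actions: frozenset   # what must be passed to a human
    success_criteria: str


def route(spec: AgentSpec, action: str) -> str:
    """Decide what happens to an action the agent proposes."""
    if action in spec.handoff_actions:
        return "handoff"   # a human takes over
    if action in spec.allowed_actions:
        return "execute"   # inside the bounded action space
    return "reject"        # anything unspecified is refused


# Illustrative values only.
spec = AgentSpec(
    task="triage support tickets",
    allowed_actions=frozenset({"read_ticket", "label_ticket"}),
    handoff_actions=frozenset({"issue_refund"}),
    success_criteria="every ticket labeled or handed off",
)

print(route(spec, "label_ticket"))
print(route(spec, "issue_refund"))
print(route(spec, "delete_account"))
```

The design choice worth noticing is the default: an action nobody specified is rejected, not attempted. That is the spec doing the work.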

2️⃣ Just like systems, trust and reversibility matter more than capabilities.

Points from paper:

- “Practitioners deliberately trade-off additional agent capability for production reliability... reliability concerns drive practitioners toward simple yet effective solutions with high controllability.”

- “... teams restrict agents to ‘read-only’ operations to prevent state modification … but leaves the final execution to human engineers.”

My take: No matter how capable AI agents become, organizations will adopt them only as fast as they learn to trust them. And the need for trust scales with the irreversibility of actions: reading an email is vastly different from executing a trade.
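One way to picture "trust scales with irreversibility" is an approval gate that compares how reversible an action is against how much autonomy the organization has granted so far. This is a rough sketch under my own assumptions: the `Reversibility` levels, the `ACTION_RISK` table, and `needs_human_approval` are all hypothetical names, not something the paper defines.

```python
from enum import IntEnum


class Reversibility(IntEnum):
    """Hypothetical risk tiers, ordered from safest to riskiest."""
    READ_ONLY = 0
    REVERSIBLE = 1
    IRREVERSIBLE = 2


# Illustrative mapping; a real system would maintain this per deployment.
ACTION_RISK = {
    "read_email": Reversibility.READ_ONLY,
    "draft_reply": Reversibility.REVERSIBLE,
    "execute_trade": Reversibility.IRREVERSIBLE,
}


def needs_human_approval(action: str, granted: Reversibility) -> bool:
    """True when the action is riskier than the autonomy granted so far."""
    # Unknown actions are treated as the worst case.
    risk = ACTION_RISK.get(action, Reversibility.IRREVERSIBLE)
    return risk > granted


# An agent trusted only with read-only work:
print(needs_human_approval("read_email", Reversibility.READ_ONLY))
print(needs_human_approval("execute_trade", Reversibility.READ_ONLY))
```

As trust grows, the organization raises `granted` one tier at a time. The agent's capability never enters the check.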

3️⃣ Just like systems, risk arises from gaps in development and deployment, not AI running amok.

Points from paper:

- “Reliability remains the top development challenge, driven by difficulties in ensuring and evaluating agent correctness.”

- “Agent behavior breaks traditional software testing... teams have not yet identified effective methods to adapt … tests for nondeterministic agent behavior.”

My take: The real concerns aren’t the scary but unrealistic scenarios of runaway, deceptive, or collusive agents. They are the gaps in development and deployment practices for such complex systems. These are engineering and risk management problems, not AI running amok.
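To illustrate why this is an engineering problem, here is a small sketch of one possible workaround for nondeterministic behavior: run the same case many times and assert on a pass rate instead of a single output. The paper says teams have not yet settled on effective methods, so treat this as my own illustration and not a recommended practice. `pass_rate`, `flaky_agent`, and the 90% figure are all hypothetical, and the "agent" is a random stand-in, not a real model.

```python
import random


def pass_rate(agent, check, prompt, trials=20, seed=0):
    """Run the agent repeatedly and return the fraction of outputs that pass."""
    rng = random.Random(seed)  # seeded so the evaluation itself is repeatable
    passes = sum(1 for _ in range(trials) if check(agent(prompt, rng)))
    return passes / trials


def flaky_agent(prompt, rng):
    """Stand-in for a nondeterministic agent: right about 90% of the time."""
    return "refund approved" if rng.random() < 0.9 else "unsure"


rate = pass_rate(flaky_agent, lambda out: "approved" in out, "Handle ticket")
print(f"pass rate: {rate:.0%}")
```

A release gate would then compare `rate` against a threshold the team agrees on, which is itself a risk management decision, not a modeling one.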

So, normal technology, normal systems, for normal problems. Not easy or trivial. But normal.

What’s your take? Normal or abnormal?

#AIRiskManagement #AgenticAI #AIAgents #NormalAI