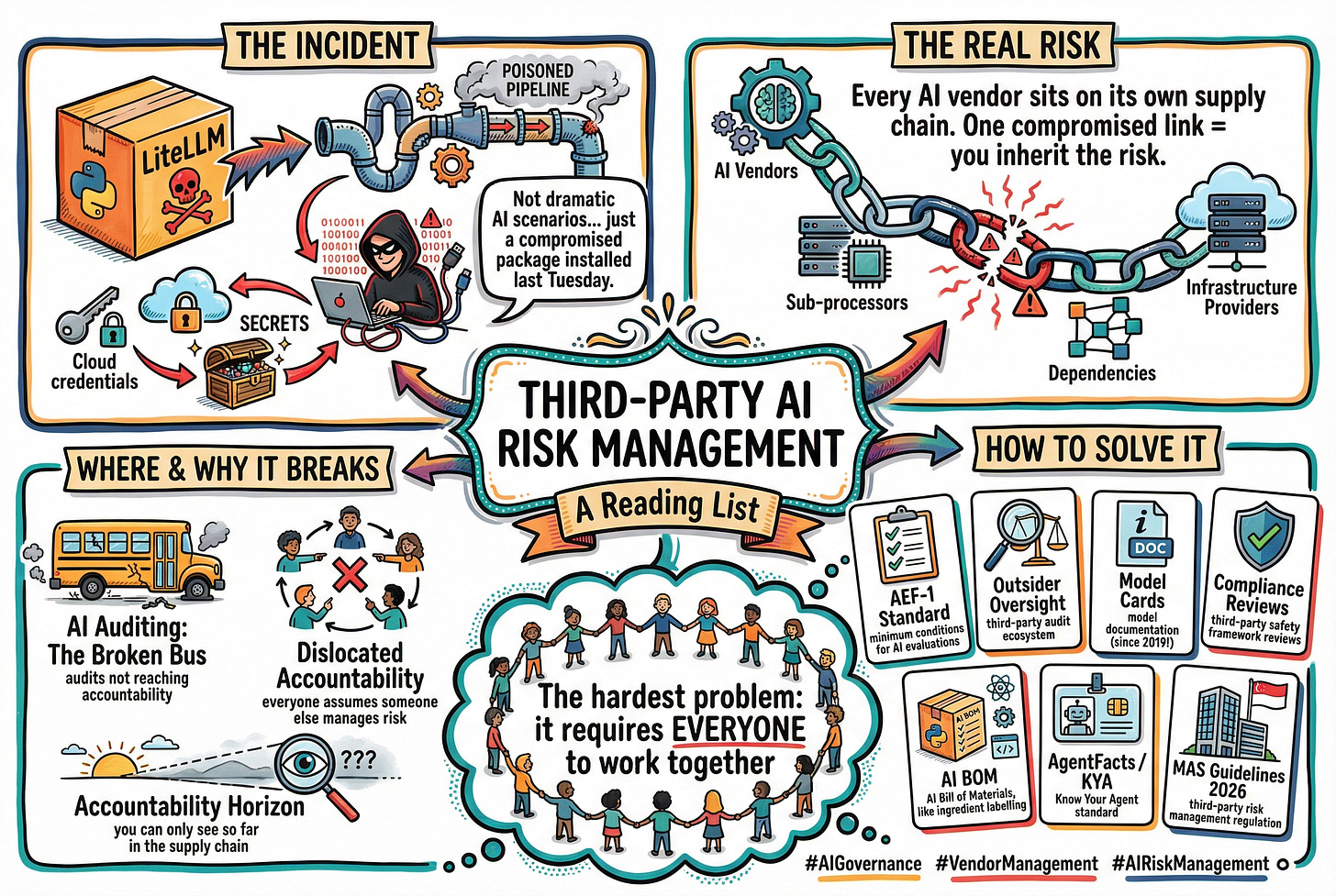

A Simple Reading List on Third-Party AI Risk Management

Probably the hardest problem in AI risk management

Yesterday, news broke that a threat actor had compromised the popular LiteLLM package on PyPI. litellm is a ubiquitous library that many teams use as a unified interface for calling different LLM APIs. The attacker didn’t need anything sophisticated. They poisoned the CI/CD pipeline through a compromised security scanner (the irony!), then pushed backdoored versions of litellm that harvested SSH keys, cloud credentials, and other secrets every time Python started. The hackers now seem to be hard at work, going through a 300 GB treasure trove and extorting multi-billion-dollar companies.

This is what third-party AI risk actually looks like. Not the dramatic scenarios we imagine - collusion, rogue AI, coordinated manipulation. Sometimes it’s a simple file in a package you installed last Tuesday.
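
For the open-source side of this problem, one widely used mitigation is to pin each dependency to the hash of an artifact you have already reviewed, so a freshly pushed version is rejected until someone looks at it. pip supports this natively in its hash-checking mode (`--require-hashes`). The minimal sketch below only illustrates the underlying check; the artifact name and pinned digests are hypothetical placeholders, not real litellm values.

```python
import hashlib
from pathlib import Path


def sha256_of(path: Path, chunk_size: int = 1 << 16) -> str:
    """Hash the file in chunks so large wheels don't need to fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_artifact(path: Path, pinned: dict[str, str]) -> bool:
    """Return True only if the artifact is pinned and its SHA-256 matches.

    `pinned` is a hypothetical mapping of artifact file name -> expected
    SHA-256 hex digest, recorded when that exact version was last reviewed.
    """
    expected = pinned.get(path.name)
    if expected is None:
        return False  # unknown artifact: fail closed instead of trusting it
    return sha256_of(path) == expected.lower()
```

Pinning only helps if the hashes come from a version you vetted before the compromise; it does nothing against a backdoor that was already present when you recorded the hash.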

And it’s not just open-source libraries. Every AI vendor you use sits on its own supply chain of dependencies, sub-processors, and infrastructure providers. A single compromised link and you inherit the risk.

So how do you govern AI you don’t control? And maybe don’t even know exists.

I can’t say I have the answer. But I can offer a reading list.

Note: I have used open-access links from arXiv as far as possible.

Where and Why Does It Break?

1. “AI Auditing: The Broken Bus on the Road to AI Accountability” - Birhane et al. | IEEE SaTML (2024)

Taxonomizes current AI audit practices across regulators, law firms, civil society, journalism, academia, and consulting. Finds that only a subset of AI audit studies translate to desired accountability outcomes. The title says it all - we’re doing audits, but many of them aren’t actually getting us where we need to go. 📄 arXiv:2401.14462

2. “Dislocated Accountabilities in the ‘AI Supply Chain’: Modularity and Developers’ Notions of Responsibility” - Widder & Nafus | Big Data & Society (2023)

Developers building AI from preexisting modules often believe that responsible AI is the job of “the next or previous person in the imagined supply chain.” Everyone assumes someone else is managing the risk. 📄 arXiv:2209.09780

3. “Understanding Accountability in Algorithmic Supply Chains” - Cobbe, Veale & Singh | FAccT (2023)

Explores how algorithmic supply chains create distributed responsibility and limited visibility due to what the authors call the “accountability horizon” - you can only see so far. Also covers cross-border supply chains and regulatory arbitrage, which matters when your AI vendor operates across jurisdictions with different rules. 📄 arXiv:2304.14749

How Might We Solve It?

1. “AEF-1: Minimum Operating Conditions for Independent Third Party AI Evaluations” - Stosz et al. | AI Evaluation Foundation (2025)

A voluntary standard and checklist that defines what evaluators actually need from AI providers: independence from the provider, sufficient access to assess characteristics of interest, and transparency in sharing methods and findings. The gap between what this standard says you need and what most vendors actually give you is the governance gap. 🔗 AI Evaluation Foundation

2. “Outsider Oversight: Designing a Third Party Audit Ecosystem for AI Governance” - Raji et al. | AAAI/ACM AIES (2022)

Synthesizes lessons from financial, environmental, and health regulation on crafting effective external oversight systems. The key insight for me: audits alone won’t achieve accountability. You need deliberate design and institutional weight, the same lesson financial regulators learned after Enron. 📄 arXiv:2206.04737

3. “Model Cards for Model Reporting” - Mitchell et al. | FAccT (2019)

The foundational paper proposing that released models be accompanied by documentation detailing their performance characteristics. This paper is from 2019; it’s now 2026. Notice how few vendors actually provide this level of transparency. In fact, some studies have shown that such transparency is declining rather than increasing, despite greater awareness. 📄 arXiv:1810.03993
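
One way to make that gap concrete during vendor due diligence is to check whatever documentation a vendor supplies against the nine sections the paper proposes. The minimal sketch below assumes the documentation has already been parsed into a dict of section heading to text; that input format and the function name are hypothetical, and the check only tests presence, not quality.

```python
# Section headings proposed in Mitchell et al. (2019), "Model Cards for Model Reporting".
MODEL_CARD_SECTIONS = (
    "Model Details",
    "Intended Use",
    "Factors",
    "Metrics",
    "Evaluation Data",
    "Training Data",
    "Quantitative Analyses",
    "Ethical Considerations",
    "Caveats and Recommendations",
)


def missing_sections(vendor_doc: dict[str, str]) -> list[str]:
    """Return model card sections that are absent or empty in a vendor's docs.

    `vendor_doc` is a hypothetical mapping of section heading -> section text.
    Headings match case-insensitively; whitespace-only text counts as missing.
    """
    present = {
        heading.strip().lower()
        for heading, text in vendor_doc.items()
        if text and text.strip()
    }
    return [s for s in MODEL_CARD_SECTIONS if s.lower() not in present]


# Usage with a made-up vendor document:
vendor_doc = {"Model Details": "v2, fine-tuned", "Intended Use": "Customer support", "Metrics": " "}
print(missing_sections(vendor_doc))
# ['Factors', 'Metrics', 'Evaluation Data', 'Training Data', 'Quantitative Analyses', 'Ethical Considerations', 'Caveats and Recommendations']
```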

4. “Third-party compliance reviews for frontier AI safety frameworks” - Homewood et al. | arXiv preprint (2025)

Explores third-party compliance reviews where an independent external party assesses whether a frontier AI company complies with its own safety framework. Discusses benefits (increased compliance, assurance to stakeholders) and real challenges (information security risks, cost burdens, reputational damage from findings). This is the emerging infrastructure for keeping vendors accountable - but it’s still nascent. 📄 arXiv:2505.01643

5. “Implementing AI Bill of Materials (AI BOM) with SPDX 3.0” - Bennet et al. | Linux Foundation Research (2025)

Extends the Software Bill of Materials concept to AI, including documentation of algorithms, data collection methods, frameworks, licensing, and compliance. If you want to know what’s actually inside the AI system you’re buying - and what changes when the vendor updates it - this is the direction. Think of it as the AI equivalent of ingredient labelling. 📄 arXiv:2504.16743
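
To illustrate the “what changes when the vendor updates it” point, here is a minimal sketch that compares two versions of a simplified AI BOM record and reports which fields changed. The record type and its fields are hypothetical and far simpler than the actual SPDX 3.0 AI and Dataset profiles; a real pipeline would parse SPDX documents rather than use a hand-rolled dataclass.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class AIBomRecord:
    """Hypothetical, simplified AI BOM entry (not the SPDX 3.0 schema)."""
    model_name: str
    model_version: str
    algorithm: str
    frameworks: tuple[str, ...]
    training_data_sources: tuple[str, ...]
    data_collection_method: str
    license_id: str


def bom_diff(old: AIBomRecord, new: AIBomRecord) -> dict[str, tuple[object, object]]:
    """Map each changed field name to its (old, new) pair, in field order."""
    changes = {}
    for f in fields(AIBomRecord):
        before, after = getattr(old, f.name), getattr(new, f.name)
        if before != after:
            changes[f.name] = (before, after)
    return changes


# Usage: a vendor quietly adds a new training data source in a point release.
v1 = AIBomRecord("support-bot", "1.0", "fine-tuned transformer", ("pytorch",),
                 ("crm-2024",), "opt-in user logs", "proprietary")
v2 = AIBomRecord("support-bot", "1.1", "fine-tuned transformer", ("pytorch",),
                 ("crm-2024", "web-crawl-2025"), "opt-in user logs", "proprietary")
print(bom_diff(v1, v2))
# {'model_version': ('1.0', '1.1'), 'training_data_sources': (('crm-2024',), ('crm-2024', 'web-crawl-2025'))}
```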

6. “AgentFacts: Universal KYA Standard for Verified AI Agent Metadata & Deployment” - Grogan | arXiv preprint (2025)

Forward-looking. Proposes a “Know Your Agent” standard with cryptographically signed capability declarations and multi-authority validation. If something like this existed at scale, it would reduce the custom integration friction that makes switching vendors so expensive. We’re not there yet, but this is the direction things need to go. 📄 arXiv:2506.13794
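
The core idea of signed declarations plus multi-authority validation fits in a few lines. To stay dependency-free, this sketch swaps in HMAC-SHA256 with shared keys as a stand-in for the public-key signatures a real scheme would use, and it accepts a declaration only if at least `threshold` known authorities validly signed the exact same payload. Authority names, keys, and declaration fields are hypothetical; this is not the AgentFacts format.

```python
import hashlib
import hmac
import json


def canonical_bytes(declaration: dict) -> bytes:
    """Serialize deterministically so every authority signs identical bytes."""
    return json.dumps(declaration, sort_keys=True, separators=(",", ":")).encode()


def sign(declaration: dict, key: bytes) -> str:
    # Stand-in for a real digital signature (e.g. Ed25519); HMAC needs a shared key.
    return hmac.new(key, canonical_bytes(declaration), hashlib.sha256).hexdigest()


def is_verified(
    declaration: dict,
    signatures: dict[str, str],
    authority_keys: dict[str, bytes],
    threshold: int,
) -> bool:
    """Accept only if >= `threshold` distinct known authorities signed this payload."""
    payload = canonical_bytes(declaration)
    valid = 0
    for authority, signature in signatures.items():
        key = authority_keys.get(authority)
        if key is None:
            continue  # ignore signatures from authorities we don't recognise
        expected = hmac.new(key, payload, hashlib.sha256).hexdigest()
        if hmac.compare_digest(expected, signature):
            valid += 1
    return valid >= threshold


# Usage: two of three hypothetical authorities sign an agent's declared capabilities.
keys = {"registry-a": b"key-a", "registry-b": b"key-b", "auditor-c": b"key-c"}
decl = {"agent_id": "invoice-agent", "capabilities": ["read_invoices"], "version": "2.1"}
sigs = {name: sign(decl, keys[name]) for name in ("registry-a", "registry-b")}
print(is_verified(decl, sigs, keys, threshold=2))  # True
tampered = {**decl, "capabilities": ["read_invoices", "send_payments"]}
print(is_verified(tampered, sigs, keys, threshold=2))  # False
```

Because the payload is serialized canonically, quietly adding an undeclared capability invalidates every existing signature.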

7. “Consultation Paper on Proposed Guidelines on Third-Party Risk Management” - Monetary Authority of Singapore | MAS (March 2026)

Last but not least: MAS just released this in March 2026. It supersedes the old outsourcing guidelines and extends expectations to all third-party arrangements, not just outsourced services. It covers risk assessment, due diligence, contracting, onboarding, ongoing monitoring, and termination, and requires financial institutions (FIs) to maintain a register of third-party arrangements, monitor concentration risk, and extend oversight to sub-contractors. AI appears only in a footnote, which refers to the MAS AI Risk Management Guidelines I wrote, but the relevance of these guidelines to AI is clear. 🔗 MAS Consultation Paper
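
The register and concentration-risk expectations map naturally onto a simple data structure. The minimal sketch below assumes a hypothetical register in which each arrangement lists its direct provider and known sub-contractors, and computes the share of critical arrangements that depend on each provider, directly or one level down. A sub-contractor shared by several vendors (a common cloud or model provider, say) then surfaces as a concentration point. The field names and the 50% threshold are illustrative assumptions, not figures from the MAS paper.

```python
from collections import Counter
from dataclasses import dataclass, field


@dataclass
class Arrangement:
    """One entry in a hypothetical third-party register."""
    name: str
    provider: str
    critical: bool
    subcontractors: list[str] = field(default_factory=list)


def concentration(register: list[Arrangement], threshold: float = 0.5) -> dict[str, float]:
    """Return providers whose share of critical arrangements is >= `threshold`.

    A provider counts once per arrangement, whether it is the direct provider
    or a listed sub-contractor. Deeper (fourth-party) chains are not traversed.
    """
    critical = [a for a in register if a.critical]
    if not critical:
        return {}
    counts = Counter()
    for a in critical:
        counts.update({a.provider, *a.subcontractors})  # set: count each party once
    shares = {p: n / len(critical) for p, n in counts.items()}
    return {p: s for p, s in shares.items() if s >= threshold}


# Usage: three critical arrangements from three different vendors.
register = [
    Arrangement("chatbot", "VendorA", True, ["CloudX", "ModelLabY"]),
    Arrangement("fraud-scoring", "VendorB", True, ["CloudX"]),
    Arrangement("doc-search", "VendorC", True, ["ModelLabY"]),
    Arrangement("hr-survey", "VendorD", False, ["CloudX"]),
]
print(sorted(concentration(register)))  # ['CloudX', 'ModelLabY']
```

On paper the three vendors look diversified, but two of the three critical arrangements sit on the same cloud and the same model lab.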

I think this is one of the hardest problems to solve in AI risk management, because it requires everyone to work together. And that’s really hard today.

#ThirdPartyAI #AIRiskManagement #VendorManagement #AIGovernance #AIReadingList