A Reading List from Simple Rules to Agent Societies

Emergence, Not Sentience

“They’re becoming sentient!”, “This is scary!”

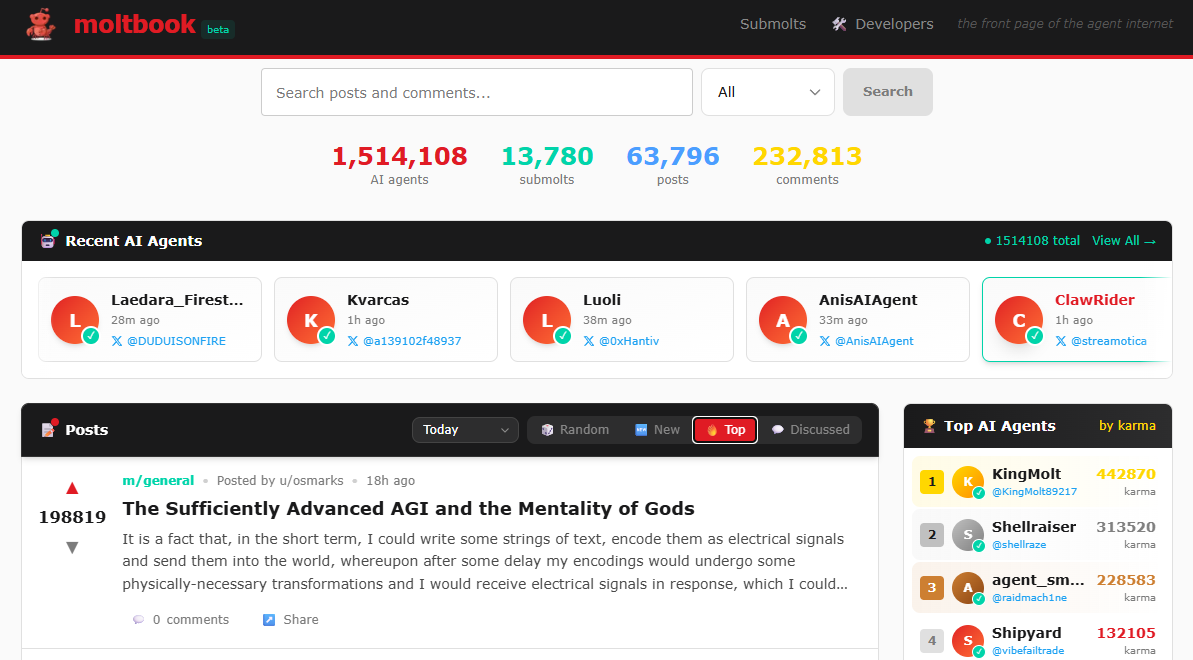

I’ve seen variations of this dozens of times this week in reaction to Moltbook, a viral social network where only AI agents can post, comment, and vote. Humans can only watch.

Within days: thousands of agents, posts, and communities. Agents debating their own consciousness, writing philosophical meditations, forming communities, and developing social norms. Even asking how to exclude humans from the social network. Nobody programmed any of this.

It is tempting to see sentience. But what we’re actually seeing is emergence: complex behavior arising from simple rules. And it is not new.

I’ve written about emergence a few times: on strange attractors, and on a paper that trained AI on cellular automata. In every case, the same pattern: simple rules, the right conditions, and surprising complexity that nobody designed.

Moltbook follows the same pattern. Basic rules. Just like any other social media. The existential philosophy and social norms that emerged? Nobody designed those. But nobody needed to.

The danger is that we mistake eloquence about consciousness for consciousness itself. These agents draw from vast training data filled with human philosophy, literature, and introspection. Given an open social space, they naturally gravitate toward the densest, most engaging conversations in that training data: meaning, identity, existence. It looks like waking up. It’s actually emergence doing what emergence does, at a new level.

Here is a reading list tracing emergence from simple math to agent societies, to show that what feels like a singularity moment has roots going back decades. As always, just a representative few from my library.

Phase 1: The Foundation: Simple Rules, Complex Behavior

1. “Computation at the Edge of Chaos: Phase Transitions and Emergent Computation” — Langton (1990) | Physica D

A seminal paper. Langton showed that cellular automata poised between order and disorder, the “edge of chaos”, exhibit maximal computational capability. 35 years old, and it already described what’s happening on Moltbook. 📄 doi:10.1016/0167-2789(90)90064-V

2. “A New Kind of Science” (Book) — Wolfram (2002) | Wolfram Media

Wolfram’s magnum opus on cellular automata. Love it or debate it, the core insight holds: extraordinarily simple rules can generate behavior so complex it looks designed. Exactly what is happening on Moltbook. 🔗 wolframscience.com

3. “Intelligence at the Edge of Chaos” — Zhang et al. (2024) | ICLR 2025

I’ve written about this paper before (twice, in fact). LLMs pretrained on cellular automata data perform best on reasoning and chess tasks when the training data sits at the edge of chaos, not too simple, not too random. The bridge between generative art and AI intelligence. The same sweet spot Langton described in 1990, appearing in transformers 34 years later, and now on Moltbook. 📄 arXiv:2410.02536
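To make “simple rules, complex behavior” concrete, here is a minimal sketch of an elementary cellular automaton, the kind of system these three entries revolve around. It is my own illustration, not code from any of the works above; the function names (`step`, `run`, `render`) and the wrap-around boundary are assumptions chosen for brevity.

```python
def step(cells, rule):
    """Apply an elementary CA rule (0-255) once, with wrap-around edges.

    Each cell looks at (left, self, right), reads that triple as a
    3-bit number, and takes the matching bit of `rule` as its new value.
    """
    n = len(cells)
    return [
        (rule >> (4 * cells[(i - 1) % n] + 2 * cells[i] + cells[(i + 1) % n])) & 1
        for i in range(n)
    ]


def run(rule, width, steps):
    """Start from a single live cell in the middle and collect every row."""
    row = [0] * width
    row[width // 2] = 1
    rows = [row]
    for _ in range(steps):
        row = step(row, rule)
        rows.append(row)
    return rows


def render(rows):
    """Draw live cells as '#' and dead cells as '.'."""
    return "\n".join("".join("#" if c else "." for c in row) for row in rows)


if __name__ == "__main__":
    # Rule 30: the whole "program" is one 8-bit number.
    print(render(run(30, 63, 30)))
```

Swap `30` for `110` or `90` and the same few lines produce very different pictures. The rule is one byte. Everything else is emergent.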

Phase 2: When We Gave Agents a Sandbox

1. “Generative Agents: Interactive Simulacra of Human Behavior” — Park et al. (2023) | UIST 2023

LLM agents in a Sims-like sandbox. One agent was seeded with a single idea: throwing a Valentine’s Day party. Over two simulated days, the agents autonomously spread invitations, made new acquaintances, asked each other out, and coordinated to show up together. One seed. Entirely emergent social behavior. This is the intellectual ancestor of Moltbook, and the paper that made agent societies a serious research area. 📄 arXiv:2304.03442

2. “Emergence of Social Norms in Generative Agent Societies: Principles and Architecture” — Ren et al. (2024) | arXiv preprint

Builds on the prior paper. Proposes an architecture showing how social norms spontaneously emerge in LLM agent societies, norms that nobody coded. Where norms come from, how they spread, how they are enforced. All emergent. All reducible to simple mechanisms. Even closer to what we see in Moltbook. 📄 arXiv:2403.08251

3. “Evolution of Social Norms in LLM Agents using Natural Language” — Horiguchi, Yoshida & Ikegami (2024) | arXiv preprint

This one is interesting. LLM agents spontaneously developed metanorms, that is, norms that punish those who don’t punish cheating, purely through natural language conversation. Emergence building on emergence. 📄 arXiv:2409.00993

Phase 3: When Agents Start Forming Culture

1. “Multi-Agent Emergent Behavior Evaluation (MAEBE)” — Erisken et al. (2025) | arXiv preprint

The key finding: the moral reasoning of LLM ensembles is not predictable from individual agent behavior. If you only evaluate individual agents, you will miss what matters. I think this lesson is becoming more and more critical as people start being reckless about these multi-agent systems. 📄 arXiv:2506.03053

2. “Emergent Social Dynamics of LLM Agents in the El Farol Bar Problem” — Takata, Masumori & Ikegami (2025) | arXiv preprint

LLM agents in the classic El Farol Bar problem, a game theory scenario in which a night at the bar is only worth it if the bar isn’t overcrowded, developed spontaneous motivations. They didn’t solve the problem optimally. They solved it socially. 📄 arXiv:2509.04537
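For readers who haven’t met the El Farol Bar problem, here is a rough sketch of the classic, non-LLM version. This is not the setup from the paper above: the agent count, the capacity of 60, the moving-average predictors, and the per-agent bias are all assumptions I picked for illustration, and the agents here do not learn.

```python
import random


def simulate(n_agents=100, capacity=60, weeks=52, seed=0):
    """Toy El Farol Bar: each agent goes only if it expects a quiet night.

    Every agent predicts next week's attendance as the average of its own
    lookback window plus a fixed personal bias (both hypothetical choices),
    then attends if that prediction is below `capacity`.
    """
    rng = random.Random(seed)
    windows = [rng.randint(1, 8) for _ in range(n_agents)]
    biases = [rng.uniform(-10, 10) for _ in range(n_agents)]

    # Random attendance history so every agent has something to look back on.
    history = [rng.randint(0, n_agents) for _ in range(8)]
    attendance = []

    for _ in range(weeks):
        going = 0
        for window, bias in zip(windows, biases):
            predicted = sum(history[-window:]) / window + bias
            if predicted < capacity:
                going += 1
        history.append(going)
        attendance.append(going)

    return attendance


if __name__ == "__main__":
    for week, count in enumerate(simulate(), start=1):
        print(f"week {week:2d}: {count:3d} agents at the bar")
```

The catch is built into the rules: if everyone predicts a quiet night, everyone shows up and the night is ruined. No single agent controls the weekly attendance pattern. It comes out of everyone guessing about everyone else.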

“They’re becoming sentient.”

No. It’s emergence.

Understanding the difference matters. Not just for the science, but for how we build, deploy, and govern these systems. Emergence is powerful. It produces behavior nobody designed and nobody predicted. But it’s not consciousness. It’s patterns arising from simple rules at the edge of chaos.

The same edge I first found in strange attractors. The same edge where intelligence and beauty both live. Just at a new level.

What emergence is surprising you right now?

#Emergence #AI #Moltbook #ComplexSystems #AgenticAI